- Delta Air Lines CEO Ed Bastian said the massive IT outage earlier this month that stranded thousands of customers will cost it $500 million.

- The airline canceled more than 4,000 flights in the wake of the outage, which was caused by a botched CrowdStrike software update and took thousands of Microsoft systems around the world offline.

- Bastian, speaking from Paris, told CNBC’s “Squawk Box” on Wednesday that the carrier would seek damages from the disruptions, adding, “We have no choice.”

499.999.990

Remember that you got your $10 gift card for Uber Eats.

Which didn’t work.

It worked but there was a $10 convenience fee.

Technically, it was a $10 gift card for each IT technician, so that could have been a whole $100!

Not so bad after all

No, only the partners did

Bastian said the figure includes not just lost revenue but “the tens of millions of dollars per day in compensation and hotels” over a period of five days. The amount is roughly in line with analysts’ estimates. Delta didn’t disclose how many customers were affected or how many canceled their flights.

It’s important to note that the DOT recently clarified a rule that reinforced that if an airline cancels a flight, they have to compensate the customer. So that’s the real reason why Delta had to spend so much, they couldn’t ignore their customers and had to pay out for their inconvenience.

So think about how much worse it might have been for fliers if a more industry-friendly Transportation Secretary were in charge. The airlines might not have had to pay out nearly as much to stranded customers, and we’d be hearing about how stranded fliers got nothing at all.

Now do Canada.

Our best airline just got bought by pretty much a broadcom, mechs are striking because, well, Canada isn’t an at-will state near Jersey, everyone’s looking to bail because now they have to be the dicks to customers they didn’t like being at the other (national) airline. The whole enshittification enchilada.

Late flights? Check. Missed connections? Check. Luggage? Laughable. And extra. Compensation? “No hablo canadiensis”.

We need that hard rule where they fuck up and they gotta make it rain too.

Like, is it so hard to keep a working but dark airplane in a parking spot for when that flight’s delayed because the lav check valve is jammed? This seems to be basic capacity planning and business continuity. They need to get a clue under their skin or else they get the hose again.

They need to get a clue under their skin or else they get the hose again.

Is that why they call you hosers?

Why do news outlets keep calling it a Microsoft outage? It’s only a crowdstrike issue right? Microsoft doesn’t have anything to do with it?

It’s sort of 90% of one and 10% of the other. Mostly the issue is a crowdstrike problem, but Microsoft really should have it so their operating system doesn’t continuously boot loop if a driver is failing. It should be able to detect that and shut down the affected driver. Of course equally the driver shouldn’t be crashing just because it doesn’t understand some code it’s being fed.

Also there is an argument to be made that Microsoft should have pushed back more at allowing crowdstrike to effectively bypass their kernel testing policies. Since obviously that negates the whole point of the tests.

Of course both these issues also exist in Linux so it’s not as if this is a Microsoft unique problem.
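The boot-loop detection idea above can be sketched as a toy model. This is only an illustration, not how Windows or Linux actually load drivers: the `Driver` and `BootGuard` classes, the `boot_critical` field, and the three-crash threshold are all made-up names and numbers for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Driver:
    name: str
    boot_critical: bool = False  # hypothetical flag: the OS will not boot without this driver
    enabled: bool = True


@dataclass
class BootGuard:
    """Toy loader policy: disable a driver after repeated boot-time crashes."""
    max_failures: int = 3  # arbitrary threshold chosen for the example
    failures: dict = field(default_factory=dict)

    def record_crash(self, driver: Driver) -> bool:
        """Count one boot-time crash; return True if the driver was disabled."""
        count = self.failures.get(driver.name, 0) + 1
        self.failures[driver.name] = count
        # A boot-critical driver is never skipped, so it can still loop forever.
        if count >= self.max_failures and not driver.boot_critical:
            driver.enabled = False
            return True
        return False


def simulate_boots(guard, driver, crashes_on_load, max_boots=10):
    """Return the boot attempt that succeeds, or None if the machine keeps looping."""
    for attempt in range(1, max_boots + 1):
        if driver.enabled and crashes_on_load:
            guard.record_crash(driver)
            continue  # crash, then reboot and try again
        return attempt
    return None


if __name__ == "__main__":
    print(simulate_boots(BootGuard(), Driver("sensor"), True))
    print(simulate_boots(BootGuard(), Driver("sensor", boot_critical=True), True))
```

In this model a non-critical driver gets switched off after three crashes and the machine comes up on the next attempt, while a driver marked boot-critical is never skipped and the loop continues until someone intervenes.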

There’s a good 20% of blame belonging to the penny pinchers choosing to allow third-party security updates without testing environments because the corporation is too cheap for proper infrastructure and disaster recovery architecture.

Like, imagine if there was a new airbag technology that promised to reduce car crashes. And so everyone stopped wearing seatbelts. And then those airbags caused every car on the road to crash at the same time.

Obviously, the airbags that caused all the crashes are the primary cause. And the car manufacturers that allowed airbags to crash their cars bear some responsibility. But then we should also remind everyone that seatbelts are important and we should all be wearing them. The people who did wear their seatbelts were probably fine.

Just because everyone is tightening IT budgets and buying licenses to panacea security services doesn’t make it smart business.

In this case, it’s less like they stopped wearing seatbelts, and more like the airbags silently disabled the seatbelts from being more than a fun sash without telling anyone.

To drop the analogy: the way the update deployed didn’t inform the owners of the systems affected, and didn’t pay attention to any of their configuration regarding update management.

The crowdstrike driver has the boot_critical flag set, which prevents exactly what you describe from happening

Yeah I know but booting in safe mode disables the flag so you can boot even if something is set to critical with it disabled. The critical flag is only set up for normal operations.

The answer is simple: they have no idea what they are talking about. And that is true for almost every topic they are reporting about.

But… the BSOD!

It was a Crowdstrike-triggered issue that only affected Microsoft Windows machines. Crowdstrike on Linux didn’t have issues and Windows without Crowdstrike didn’t have issues. It’s appropriate to refer to it as a Microsoft-Crowdstrike outage.

Funny enough, crowdstrike on Linux had a very similar issue a few months back.

It’s similar. They did cause kernels to crash. But that’s because they hit and uncovered a bug in the ebpf sandboxing in the kernel, which has since been fixed

Are they actually shipping kernel modules? Why is this needed to protect from whatever it is they supposedly protect from?

They need a file io shim. That’s gotta be a module or it’ll be too slow.

I guess microsoft-crowdstrike is fair, since the OS doesn’t have any kind of protection against a shitty antivirus destroying it.

I keep seeing articles that just say “Microsoft outage”, even on major outlets like CNN.

Microsoft did have an Azure outage the day before that affected airlines. Media people don’t know enough about it to differentiate the two issues.

To be clear, an operating system in an enterprise environment should have mechanisms to access and modify core system functions. Guard-railing anything that could cause an outage like this would make Microsoft a monopoly provider in any service category that requires this kind of access to work (antivirus, auditing, etc). That is arguably worse than incompetent IT departments hiring incompetent vendors to install malware across their fleets resulting in mass-downtime.

The key takeaway here isn’t that Microsoft should change windows to prevent this, it’s that Delta could have spent any number smaller than $500,000,000 on competent IT staffing and prevented this at a lower cost than letting it happen.

Delta could have spent any number smaller than $500,000,000 on competent IT staffing and prevented this at a lower cost than letting it happen.

I guarantee someone in their IT department raised the point of not just downloading updates. I can guarantee they advise to test them first because any borderline competent I.T professional knows this stuff. I can also guarantee they were ignored.

Also, part of the issue is that the update rolled out in a way that bypassed deployments having auto updates disabled.

You did not have the ability to disable this type of update or control how it rolled out.

https://www.crowdstrike.com/blog/falcon-content-update-preliminary-post-incident-report/

Their fix for the issue includes “slow rolling their updates”, “monitoring the updates”, “letting customers decide if they want to receive updates”, and “telling customers about the updates”.

Delta could have done everything by the book regarding staggered updates and testing before deployment and it wouldn’t have made any difference at all. (They’re an airline so they probably didn’t but it wouldn’t have helped if they had).

Delta could have done everything by the book

Except pretty much every paragraph in ISO27002.

That book?

Highlights include:

- ops procedures and responsibilities

- change management (ohh. That’s a good one)

- environmental segregation for safety (ie don’t test in prod)

- controls against malware

- INSTALLATION OF SOFTWARE ON OPERATIONAL SYSTEMS

- restrictions on software installation (ie don’t have random fuckwits updating stuff)

…etc. like, it’s all in there. And I get it’s super-fetch to do the cool stuff that looks great on a resume, but maybe, just fucking maybe, we should be operating like we don’t want to use that resume every 3 months.

External people controlling your software rollout by virtue of locking you into some cloud bullshit for security software, when everyone knows they don’t give a shit about your apps security nor your SLA?

Glad Skippy’s got a good looking resume.

Yes, that book. Because the software indicated to end users that they had disabled or otherwise asserted appropriate controls on the system updating itself and its update process.

That’s sorta the point of why so many people are so shocked and angry about what went wrong, and why I said “could have done everything by the book”.

As far as the software communicated to anyone managing it, it should not have been doing updates, and CrowdStrike didn’t advertise that it updated certain definition files outside of the exposed settings, nor did they communicate that those changes were happening.

Pretend you’ve got a nice little fleet of servers. Let’s pretend they’re running some vaguely responsible Linux distro, like a cent or Ubuntu.

Pretend that nothing updates without your permission, so everything is properly by the book. You host local repositories that all your servers pull from so you can verify every package change.

Now pretend that, unbeknownst to you, canonical or redhat had added a little thing to dnf or apt to let it install really important updates really fast, and it didn’t pay any attention to any of your configuration files, not even the setting that says “do not under any circumstances install anything without my express direction”.

Now pretend they use this to push out a kernel update that patches your kernel into a bowl of lukewarm oatmeal and reboots your entire fleet into the abyss.

Is it fair to say that the admin of this fleet is a total fuckup for using a vendor that, up until this moment, was generally well regarded and presented no real reason to doubt while being commonly used? Even though they used software that connected to the Internet, and maybe even paid for it?

People use tools that other people build. When the tool does something totally insane that they specifically configured it not to, it’s weird to just keep blaming them for not doing everything in-house. Because what sort of asshole airline doesn’t write their own antivirus?

Competent IT staffing includes IT management

Delta didn’t download the update, tho. Crowdstrike pushed it themselves.

yes, the incompetence was a management decision to allow an external vendor to bypass internal canary deployment processes.

If you own the network you can prevent anything you want.

The key takeaway here isn’t that Microsoft should change windows to prevent this, it’s that Delta could have spent any number smaller than $500,000,000 on competent IT staffing and prevented this at a lower cost than letting it happen.

Well said.

Sometimes we take out technical debt from the loanshark on the corner.

Honestly, with how terrible Windows 11 has been degrading in the last 8 or 9 months, it’s probably good to turn up the heat on MS even if it isn’t completely deserved. They’re pissing away their operating system goodwill so fast.

There have been some discussions on other Lemmy threads, the tl;dr is basically:

- Microsoft has a driver certification process called WHQL.

- This would have caught the CrowdStrike glitch before it ever went production, as the process goes through an extreme set of tests and validations.

- AV companies get to circumvent this process, even though other driver vendors have to use it.

- The part of CrowdStrike that broke Windows, however, likely wouldn’t have been part of the WHQL certification anyways.

- Some could argue software like this shouldn’t be kernel drivers, maybe they should be treated like graphics drivers and shunted away from the kernel.

- These tech companies are all running too fast and loose with software and it really needs to stop, but they’re all too blinded by the cocaine dreams of AI to care.

They’re pissing away their operating system goodwill so fast.

They pissed it away {checks DoJ v. Microsoft} 25 years ago.

Windows 7 and especially 10 started changing the tune. 10: Linux and Android apps running integrated to the OS, huge support for very old PC hardware, support for Android phone integration, stability improvements like moving video drivers out of the kernel, maintaining backwards compatibility with very old apps (1998 Unreal runs fine on it!) by containerizing some to maintain stability while still allowing old code to run. For a commercial OS, it was trending towards something worth paying for.

I don’t know that Microsoft has OS goodwill. People use it because the apps are there, not because Windows has a good user experience.

The driver is WHQL approved, but the update file was full of nulls and broke it.

Microsoft developed an API that would allow anti malware software to avoid being in ring 0, but the EU deemed it to be anti competitive and prohibited them from releasing it.

I think what I was hearing is that the CrowdStrike driver is WHQL approved, but the theory is that it’s just a shell to execute code from the updates it downloads, thus effectively bypassing the WHQL approval process.
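Taking the "file full of nulls" claim in the comments above at face value (it is the thread's claim, not something verified here), the kind of sanity check a driver could run before trusting a downloaded content file might look like this minimal sketch. The `MAGIC` header and both function names are invented for the example and say nothing about CrowdStrike's real file format.

```python
MAGIC = b"CHNL"  # hypothetical 4-byte header; not the real file format


def validate_content_file(data: bytes) -> tuple[bool, str]:
    """Cheap checks to run before handing a content file to a parser."""
    if not data:
        return False, "empty file"
    if data.count(0) == len(data):
        return False, "file is all null bytes"
    if not data.startswith(MAGIC):
        return False, "bad header"
    return True, "ok"


def load_update(data: bytes, current_rules: bytes) -> bytes:
    """Keep the last known-good rules if the new file fails validation."""
    ok, _reason = validate_content_file(data)
    return data if ok else current_rules


if __name__ == "__main__":
    good = MAGIC + b"\x01\x02\x03"
    print(validate_content_file(b"\x00" * 64))
    print(load_update(b"\x00" * 64, good) == good)
```

The point of the design is that a bad file results in "keep running the old rules" rather than a crash in kernel context.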

Because Microsoft could have prevented it by introducing proper APIs in the kernel like Linux did when crowdstrike did the same on their Linux solution?

It’s sort of like calling the terrorist attack on 911 the day the towers fell.

Although in my opinion, microsoft does have some blame here, but not for the individual outage, more for windows just being a shit system and for tricking people into relying on it.

Pretty sure their software’s legal agreement, and the corresponding enterprise legal agreement, already cover this.

The update was the first domino, but the real issue was the disarray of Delta’s IT Operations and their inability to adequately recover in a timely fashion. Sounds like a customer skimping on their lifecycle and capacity planning so that Ed can get just a bit bigger bonus for meeting his budget numbers.

Negligence can make contracts a little less permanent.

Delta was the only airline to suffer a long outage. That’s why I say Crowdstrike is the kickoff, but the poor, drawn-out response and time to resolve it is totally on Delta.

Idk, CrowdStrike had a few screwups in their pocket before this one. They might be on the hook for costs associated with an outage caused by negligence. I’m not a lawyer, but I do stand next to one in the elevator.

It breaks down once Delta begins arguing costs directly associated with their poor disaster recovery efforts.

Why is CrowdStrike responsible for Delta’s poor practices?

Couldn’t agree more.

And now that this occurred, and cost $500m, perhaps finally some enterprise companies may actually resource IT departments better and allow them to do their work. But who am I kidding, that’s never going to happen if it hits bonuses and dividends :(

We just lost 500 million - we can’t afford that right now! /s

According to the headhunters who are constantly trying to recruit me for inappropriate jobs, this is starting to get traction with companies, and they are starting to actually hire fully skilled IT departments, as opposed to the ones merely willing to work for near minimum wage, which is what they had before.

In some ways it won’t really make a difference because fully staffed up I.T departments also need to be listened to by management, and that doesn’t happen often in corporate environments, but still they’ll pay the big bucks so that’s good enough for me.

Fucking lol.

According to the headhunters who are constantly trying to recruit me for inappropriate jobs, this is starting to get traction with companies, and they are starting to actually hire fully skilled IT departments, as opposed to the ones merely willing to work for near minimum wage, which is what they had before.

In some ways it won’t really make a difference because fully staffed up I.T departments also need to be listened to by management, and that doesn’t happen often in corporate environments, but still they’ll pay the big bucks so that’s good enough for me.

I wasn’t affected by this at all and only followed it on the news and through memes, but I thought this was something that needed hands-on-keyboard to fix, which I could see not being the fault of IT because they stopped planning for issues that couldn’t be handled remotely.

Was there some kind of automated way to fix all the machines remotely? Is there a way Delta could have gotten things working faster? I’m genuinely curious because this is one of those Windows things that I’m too Macintosh to understand.

All the servers and infrastructure should have “lights out management”. I can turn on a server, reconfigure the bios and install windows from scratch on the other side of the world.

Potentially all the workstations / end point devices would need to be repaired though.

The initial day or two I’ll happily blame on crowdstrike. After that, it’s on their IT department for not having good DR plans.

Hell I just did that with what’s effectively a black box this morning - if it’s critical, it gets done the right way or don’t bother doing it at all.

Edit: Bonus unnecessary word

There was no easy automated way if the systems were encrypted, which any sane organization mandates. So yes, did require hands-on-keyboard. But all the other airlines were up and running much faster, and they all had to perform the same fix.

Basically, in macOS terms, the OS fails to boot, so every system just goes to recovery only, and you need to manually enter the recovery lock and encryption password on every system to delete a file out of /System (which isn’t allowed in macOS because it’s read only but just go with it) before it will boot back into macOS. Hope you had those recorded/managed/backed up somewhere otherwise it’s a complete system reinstall…

So yeah, not fun for anyone involved.

Maybe not.

No, POOR PLANNING and allowing an external entity the ability to take you down, that’s what did it. Pretend you’re pros, Delta, and be adequate.

Holy halfwit projection, batman.

The stories I could tell about how companies will hire a team to run tests on their digital and physical systems while also limiting access to outside nodes disconnected or screened from their core, primary, IMPORTANT systems.

Kicker is that plenty of people who work for these companies get it. Very rarely does someone in a position to do something about it actually understand. A few thousand dollars and they could have hired a hat or two to run penetration on systems and fixed the vulnerabilities, or at least shored them up so this fucking 000 bug didn’t impact them so harshly.

But naaaaaaah. Gotta cut payroll, brb.

I’m not sure any kind of pentest would prevent CrowdStrike’s backdoor access to release updates at its own discretion and cadence. The only way to avoid that would be blocking CrowdStrike from accessing the Internet but I’d bet they’d 100% brick the host over letting that happen. If anything this is a good lesson in not installing malware to prevent even worse malware. You handed the keys to your security to a party that clearly doesn’t care and paid the price. My reaction to that legal disclaimer of CrowdStrike’s stating they take no responsibility for anything they do… responsibility is the only reason anyone would buy anything from them (aside from being forced by legal requirements that clearly didn’t have anyone who understood them involved in the legislation).

I know it seems shocking but some companies do and did plan for backup systems in the event their entire Windows platform blue screened. That’s why there were some companies that had a hard time with it and some that didn’t.

The original poster is correct that Delta should shoulder some of the blame. The outage caused a problem but it was Delta’s response that caused 500 million in damages. I’m sure that CrowdStrike didn’t advise Delta to put all their eggs in one basket, did they?

Yeah, I agree. My whole comment was basically crowdstrike is liable but companies should reflect and take some accountability for their overreliance on CS.

Bah… you’re right. I’ve just become so disillusioned by the smoke and mirrors. So many critical systems protected by poorly managed file mazes and a prayer that Susan in accounting doesn’t get anything higher than the digital equivalent of a toddler slamming its face onto a keyboard several times email from bos$6&776ggjskbigman@poorlyspelledcompany.bendover because some 13 year old with computer access got clever.

I’m a bit agitated atm, sorry about that.

Don’t worry everyone… Each and every one of the CEOs involved in this debacle will earn millions this year and next and will eventually retire with more money than they could possibly spend in 10 lifetimes

If anything, they’ll continue to fall upwards completely deserving even more money

Additionally, don’t worry, they’ll just shift more costs onto the consumer and ultimately widen their profit-margins in no time.

Perhaps Boeing can save the airline industry a little more by lowering the costs of their planes by removing another bolt and jerry-rigging flight software onto an antiquated platform.

Yeah… Maybe don’t put all your IT eggs in one basket next time.

Delta is the one that chose to use Crowdstrike on so many critical systems therefore the fault still lies with Delta.

Every big company thinks that when they outsource a solution or buy software they’re getting out of some responsibility. They’re not. When that 3rd party causes a critical failure the proverbial finger still points at the company that chose to use the 3rd party.

The shareholders of Delta should hold this guy responsible for this failure. They shouldn’t let him get away with blaming Crowdstrike.

So you think Delta should’ve had a different antivirus/EDR running on every computer?

I think what @riskable@programming.dev was saying is you shouldn’t have multiple mission critical systems all using the same 3rd party services. Have a mix of at least two, so if one 3rd party service goes down not everything goes down with it

That sounds easy to say, but in execution it would be massively complicated. Modern enterprises are littered with 3rd party services all over the place. The alternative is writing and maintaining your own solution in house, which is an incredibly heavy lift to cover the entirety of all services needed in the enterprise. Most large enterprises are resource-starved as is, and this suggestion of having redundancy for any 3rd party service that touches mission critical workloads would probably increase burden and costs by at least 50%. I don’t see that happening in commercial companies.

As far as the companies go, their lack of resources is an entirely self-inflicted problem, because they won’t invest in increasing those resources, like more IT infrastructure and staff. It’s the same as many companies that keep terrible backups of their data (if any) when they’re not bound to by the law, because they simply don’t want to pay for it, even though it could very well save them from ruin.

The crowdstrike incident was as bad as it was exactly because loads of companies had their eggs in one basket. Those that didn’t recovered much quicker. Redundancy is the lesson to take from this that none of them will learn.

As far as the companies go, their lack of resources is an entirely self-inflicted problem, because they won’t invest in increasing those resources, like more IT infrastructure and staff.

Play that out to its logical conclusion.

- Our example airline suddenly doubles or triples its IT budget.

- The increased costs don’t actually increase profit; they merely increase resiliency.

- Other airlines don’t do this.

- Our example airline has to increase ticket prices or fees to cover the increased IT spending.

- Other airlines don’t do this.

- Customers start predominantly flying the other airlines with their cheaper fares.

- Our example airline goes out of business, or gets acquired by one of the other airlines

The end result is all operating airlines are back to the prior stance.

Two big assumptions here.

First, multiple business systems are already being supported, and the OS only incidentally. Assuming double or triple IT costs is very unlikely, but feel free to post evidence to the contrary.

Second, a tight coupling between costs and prices. Anyone that’s been paying attention to gouging and shrinkflation of the past few years of record profits, or the doomsaying virtually anywhere the minimum wage has increased and businesses haven’t been annihilated, would know this is nonsense.

First, multiple business systems are already being supported, and the OS only incidentally. Assuming double or triple IT costs is very unlikely, but feel free to post evidence to the contrary.

The suggestion the poster made was that ALL 3rd party services need to have an additional counterpart for redundancy. So we’re not just talking about a second AV vendor. We have to duplicate ALL 3rd party services running on or supporting critical workloads to meet what that poster is suggesting.

- inventory agents

- OS patching

- security vulnerability scanning

- file and DB level backup

- monitoring and alerting

- remote access management

- PAM management

- secrets management

- config management

…the list goes on.

Anyone that’s been paying attention to gouging and shrinkflation of the past few years of record profits, or the doomsaying virtually anywhere the minimum wage has increased and businesses haven’t been annihilated, would know this is nonsense.

You’re suggesting the companies simply take less profits? Those companies’ boards of directors will get annihilated by shareholders. The board would be voted out with their IT improvement plans, and replaced with those that would return to profitability.

There is an argument to be made that the IT team and infrastructure aren’t supposed to be an ongoing expense or revenue generation. It’s insurance against catastrophe. And if you wanna pivot to something profit generating then you can reassign them to improve UX or other client impacting things that can result in revenue gain. For example notification systems for flight delays are absolute garbage IMO. I land, I check in my flights app and it doesn’t show any changes to when my flight is departing, I load Google and those changes are right there. Or they could add maps for every airport they operate a flight from to their apps. They could streamline the process for booking a replacement flight when your incoming flight is delayed or you missed a connecting flight (I had to walk up to a desk, wait in a queue with dozens of other people for half an hour just to be stamped with a new boarding pass and moved along). They could add an actual notification system for when boarding starts (my Turkish Air flight at one airport didn’t have an intercom so I didn’t know it was boarding and missed the flight). All of these are just examples but my point is there’s an inherent shortsightedness in assuming an investment in IT, especially for a company that deals primarily with interconnectivity, is wasted. This is the reason everything is so sh*tty for users. Companies prefer minimising costs to maximising value to the user even if the latter can generate long term revenue and increase user retention.

customers start predominantly flying the other airlines with cheaper fares

I was with you till this part, except with the way flying is set up in this country, there’s very little competition between airlines. They’ve essentially set themselves up with airports/hubs so if an airline is down for a day, that’s kinda it unless you want to switch to a different airport.

In the USA besides very small cities, this isn’t my experience. My flights out of my home airport are spread across 5 or 6 airlines. My city doesn’t even break into the top ten largest in the nation. As far as domestic destinations, there are usually 3 to 5 airlines available as choices.

Our example airline has to increase ticket prices or fees to cover the increased IT spending.

Or they could just cut already excessive executive bonuses…

You know they’re not going to do that, so how useful is it to suggest that? If we just want to talk about pie-in-the-sky fixes then sure, but at the end of that we’ll likely have nationalized airlines, which isn’t happening either.

So are we talking about fantasy or things that can actually happen?

In this case, it’s a local third party tool and they thought they could control the cadence of updates. There was no reason to think there was anything particularly unstable about the situation.

This is closer to saying that half of your servers should be Linux and half should be windows in case one has a bug.

Crowdstrike bypassed user controls on updates.

The normal responsible course of action is to deploy an update to a small test environment, test to make sure it doesn’t break anything, and then slowly deploy it to more places while watching for unexpected errors.
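That course of action can be sketched roughly as below. It is a simplified illustration under assumptions: `staged_rollout`, the stage fractions, and the 5% failure threshold are all invented for the example, and `apply_update` stands in for whatever a real deployment tool does to one host.

```python
from typing import Callable, Sequence


def staged_rollout(
    hosts: Sequence[str],
    apply_update: Callable[[str], bool],  # returns True if the host is healthy afterwards
    stages: Sequence[float] = (0.01, 0.10, 0.50, 1.0),  # cumulative share of the fleet
    max_failure_rate: float = 0.05,  # arbitrary threshold for the example
) -> dict:
    """Push an update in growing batches and stop as soon as a batch looks bad."""
    updated, failed = [], []
    done = 0
    for fraction in stages:
        target = max(1, int(len(hosts) * fraction))
        batch = hosts[done:target]
        batch_failed = [h for h in batch if not apply_update(h)]
        updated.extend(h for h in batch if h not in batch_failed)
        failed.extend(batch_failed)
        done = max(done, target)
        # Watch for unexpected errors before widening the rollout.
        if batch and len(batch_failed) / len(batch) > max_failure_rate:
            return {"status": "halted", "updated": updated,
                    "failed": failed, "untouched": list(hosts[done:])}
    return {"status": "complete", "updated": updated,
            "failed": failed, "untouched": []}


if __name__ == "__main__":
    fleet = [f"host-{i}" for i in range(100)]
    result = staged_rollout(fleet, lambda host: False)  # an update that breaks every host
    print(result["status"], len(result["failed"]), len(result["untouched"]))
```

With a fleet of 100 and an update that breaks everything, the first stage touches a single host, the failure rate trips the threshold, and the other 99 machines are never updated.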

Crowdstrike shotgunned it to every system at once without monitoring, with grossly inadequate testing, and entirely bypassed any user configurable setting to avoid or opt out of the update.

I was much more willing to put the blame on the organizations that had the outages for failing to follow best practices before I learned that the way the update was pushed would have entirely bypassed any of those safeguards.

It’s unreasonable to say that an organization needs to run multiple copies of every service with different fundamental infrastructure choices for each in case one magics itself broken.

Crowdstrike also bypassed Microsoft’s driver signing as part of their update process, just to make the updates release faster.

That MS is getting any flak for this is just shit journalism.

Adding another reply since I went on a bit of a rant in my other one… You’re actually missing the point I was trying to make: No matter what solution you choose it’s still your fault for choosing it. There are a zillion mitigations and “back up plans” that can be used when you feel like you have no choice but to use a dangerous 3rd party tool (e.g. one that installs kernel modules). Delta obviously didn’t do any of that due diligence.

Kernel module is basically the only way to implement this type of security software. That’s the only thing that has system wide access to realtime filesystem and network events.

Yes, they’re ultimately liable to their customers because that’s how liability works, but it’s really hard to argue that they’re at fault for picking a standard piece of software from a leading vendor that functions roughly the same as every piece of software in this space for every platform functions, which then bypassed all configurations they could make to control updates, grabbed a corrupted update and crashed the computer.

It’s like saying it’s the driver’s fault the brakes on their Toyota failed and they crashed into someone. Yes, they crashed and so their insurance is going to have to cover it, but you don’t get angry at the driver for purchasing a common car in good condition and having it break in a way they can’t control.

What mitigations should they have had? All computer systems are mostly third party tools. Your OS is a third party tool. Your programming language is a third party tool. Webserver, database, loadbalancer, caching server: all third party tools. Hardware drivers? Usually third party, but USB has made a lot of things more generic.

If your package manager decides to ignore your configuration and update your kernel to something mangled and reboot, your computer is going to crash and it’ll stay down until you can get in there to tell it to stop booting the mangled kernel.

It is absolutely not the only way to implement EDR. Linux has eBPF which is what Crowdstrike and other tools use on Linux instead of a kernel module. A kernel module is only necessary on Windows because Windows doesn’t provide the necessary functionality.

Mitigating factors: Use (and take) regular snapshots and test them. My company had all our virtual desktops restored within half an hour on that day. If you don’t think Windows Volume Shadow Copy is capable or actually useful for that in the real world then you’re making my argument for me! LOL

Another option is to use systems (like Linux) that let you monitor these sorts of EDR things while remaining super locked down. You can run EDR tools on immutable Linux systems! You can’t do that on Windows because (of backwards compatibility!) that OS can’t run properly in an immutable share.

Windows was not made to be secure like that. Its security contexts are just hacks upon hacks. Far too many things need admin rights (or more privileges!) just to function on a basic level.

OSes like Linux were built to deal with these sorts of things. Linux, specifically, has gone through so many stages of evolution it makes Windows look like a dinosaur that barely survived the asteroid impact somehow.

eBPF, the kernel level tool? Because you need to be in the kernel to have that level of access, which is what I was saying? The one with a bug that crowd strike hit that caused Linux servers to KP?

Yes, I said “kernel module” when I should have said “software executing in a kernel context”. That’s on me.

By the way, eBPF? Third party software by most metrics. Developed and maintained by Facebook, Cisco, Microsoft, Google and friends. Also available on windows, albeit not as deeply integrated due to the layers of cruft you mention.

I’m glad you were able to recover your VMs quickly. How quickly were you able to recover your non-virtualized devices, like laptops, desktops or that poor AD server that no one likes?

Airlines need more than just servers to operate. They also need laptops for various ground crew, terminals for the gate crew and ticketing agents, desktops for the people in offices outside the airport who manage “stuff” needed to keep an airline running.

You seem to be much more interested in talking about Linux being better than windows, which is a statement I agree with, but it’s quite different from your original point that “Delta is at fault because they used third party tools”.

My point was that it’s unreasonable to say that Delta should have known better than to use a third party tool, while recommending Linux (not written by Delta), whose ecosystem is almost entirely composed of different third parties that you need to trust, either via system software (webserver), holding your critical data (database), kernel code (network card makers usually add support by making a kernel patch), or entire architectural subsystems (eBPF was written by a company that sells services that use it, and a good chunk of the security system was the NSA).

None of that bothers me. I just don’t get how it doesn’t bother you if you don’t trust well regarded vendors in kernel space to have those same vendors making kernel patches.

Sounds like they executed their plans just fine.

And due diligence is “the investigation or exercise of care that a reasonable business or person is normally expected to take before entering into an agreement or contract with another party or an act with a certain standard of care”. Having BC/DR plans isn’t part of due diligence.

If I were in charge I wouldn’t put anything critical on Windows. Not only because it’s total garbage from a security standpoint but it’s also garbage from a stability standpoint. It’s always had these sorts of problems and it always will because Microsoft absolutely refuses to break backwards compatibility and that’s precisely what they’d have to do in order to move forward into the realm of, “modern OS”. Things like NTFS and the way file locking works would need to go. Everything being executable by default would need to end and so, so much more low-level stuff that would break like everything.

Aside about stability: You just cannot keep Windows up and running for long before you have to reboot due to the way file locking works (nearly all updates can’t apply until the process owning them “lets go”, as it were and that process usually involves kernel stuff… due to security hacks they’ve added on since WinNT 3.5 LOL). You can’t make it immutable. You can’t lock it down in any effective way without disabling your ability to monitor it properly (e.g. with EDR tools). It just wasn’t made for that… It’s a desktop operating system. Meant for ONE user using it at a time (and one main application/service, really). Trying to turn it into a server that runs many processes simultaneously under different security contexts is just not what it was meant to do. The only reason why that kinda sort of works is because of hacks upon hacks upon hacks and very careful engineering around a seemingly endless array of stupid limitations that are a core part of the OS.

Please go read up on how this error happened.

This is not a backwards compatibility thing, or on Microsoft at all, despite the flaws you accurately point out. For that matter the entire architecture of modern PCs is a weird hodgepodge of new systems tacked onto older ones.

- Crowdstrike’s signed driver was set to load at boot, edit: by Crowdstrike.

- Crowdstrike’s signed driver was running unsigned code at the kernel level and it crashed. It crashed because the code was trying to read a pointer from the corrupt file data, and it had no protection at all against a bad file.

Just to reiterate: It loaded up a file and read from it at the kernel level without any checks that the file was valid.

- As it should, windows treats any crash at the kernel level as a critical issue and bluescreens the system to protect it.

The entire fix is to boot into safe mode and delete the corrupt update file crowdstrike sent.
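To make the “no checks that the file was valid” point concrete, here is a rough sketch of what bounds-checking a content file before using it could look like. The file layout (a `CHNL` magic, an entry count, and an offset/length table) is made up for illustration and is not CrowdStrike’s actual format; the function names are hypothetical too.

```python
import struct

MAGIC = b"CHNL"                  # hypothetical magic bytes, not the real format
HEADER = struct.Struct("<4sI")   # magic, number of entries
ENTRY = struct.Struct("<II")     # offset and length of one entry


class InvalidContentFile(ValueError):
    """Raised when a content file fails validation."""


def parse_content(data: bytes) -> list:
    """Return the entries in a content file, rejecting malformed input."""
    if len(data) < HEADER.size:
        raise InvalidContentFile("file shorter than header")
    magic, count = HEADER.unpack_from(data, 0)
    if magic != MAGIC:
        raise InvalidContentFile("bad magic")
    table_end = HEADER.size + count * ENTRY.size
    if table_end > len(data):
        raise InvalidContentFile("offset table runs past end of file")
    entries = []
    for i in range(count):
        offset, length = ENTRY.unpack_from(data, HEADER.size + i * ENTRY.size)
        # Never follow an offset that points outside the file.
        if offset < table_end or offset + length > len(data):
            raise InvalidContentFile(f"entry {i} points outside the file")
        entries.append(data[offset:offset + length])
    return entries


def load_or_keep_previous(data: bytes, previous: list) -> list:
    """Fall back to the last known-good content instead of crashing."""
    try:
        return parse_content(data)
    except InvalidContentFile:
        return previous
```

The design choice that matters is the fallback: a bad file gets rejected and the previous content stays in use, rather than the parser trusting whatever offsets the file contains.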

I enjoy hating on Windows as much as the next guy who installed Linux on their laptop once, but the bottom line is 90 percent of businesses use it because it does work.

Blaming the people who made the decision to purchase arguably the most popular EDR solution on the planet and use it (those bastards!) does nothing but show a lack of understanding of how business-related IT decisions work.

Alternatively, they could have taken Crowdstrike’s offer of layered rollouts, but Delta declined this and wanted all updates immediately to all devices.

Good. They’ve been stealing from their customers for decades; this is fuckin’ karmic.

Also, maybe don’t put all your eggs into one single basket, from an infrastructure perspective.

Yeah, I say as I migrate another service to Azure…

That’s not just putting all your eggs into a single basket, that’s putting all your eggs into a rotting trashcan

Tell me you haven’t used Azure without telling me you haven’t used Azure.

Azure is fine. It is not amazing, it is not terrible, it is fine.

Our c-level team was super excited to announce we were migrating from AWS to Azure. “This is going to be so great for our infrastructure team!” The infrastructure team groaned. “But Azure is so much better!” Yeah, it’s fine. It’s all pros and cons. But migration sucks.

It’s like, we have sales people. We sell software. They know the software sales process. They know that sales is all pros, no cons. They know that the team that took them to dinner and golfing and gave them swag wouldn’t know an API from an APU. They know that migration is a major pain point. Why would they expect enthusiasm from the team that has to do it?

But they’re excited about it. It’s gonna be great.

Tell me you haven’t used Azure without telling me you haven’t used Azure.

That’s how we know we have.

No. The cost of lost profits will just be passed on to you.

I can’t wait to see crowdstrike get liquidated from all of this, MSOFT is getting so much flak when this straight up wasn’t their fault

The “reboot 15 times” solution, etc. is a bit on them. But in general I agree, CrowdStrike and the industries that need that kind of service should know better.

Their stock is at +44% since July 2023, they might be fine

Pure gambling

Lawsuits haven’t started yet, too soon. Companies affected by the outage are still running numbers to see HOW affected they were

Crowdstrike wouldn’t have a business model if the security of Microsoft Windows wasn’t so awful. Microsoft isn’t directly to blame for this, but they’re not blameless either.

Windows defender for enterprise is a strong competitor in that market, and any CISO that went with crowdstrike did it because the crowdstrike sales team hosts really great lunches and sponsors lots of sports teams

Why would they be liquidated?

Inability to pay the settlements on the inevitable lawsuits that will be coming their way for halting the world economy for a day

I’m sure their Terms of Service make it clear they have limited liability or need to go to arbitration.

Yea because that always holds up in court. I’m sure every legal team will claim the lack of QA was gross negligence on Crowdstrike’s part and that normally allows vast portions of agreements to be nullified as one party clearly didn’t hold up their end of the deal

Sure, but they did send a $10 Uber Eats gift card, so you gotta take that into account.

499,999,990. Could be worse.

Edit: oh I see someone else beat me to it

Make it per affected device and then we can talk.

Good thing they got that $10 Uber Eats card.

Aw that’s a shame. Poor rich company.

Crowdstrike offers layered rollouts, but some executive declined this because they want the most up to date software at all times.

Not for the rapid update that broke everything.

See post incident report:

How Do We Prevent This From Happening Again?

Software Resiliency and Testing

- Improve Rapid Response Content testing by using testing types such as:
  - Local developer testing
  - Content update and rollback testing
  - Stress testing, fuzzing and fault injection
  - Stability testing
  - Content interface testing

- Add additional validation checks to the Content Validator for Rapid Response Content.
  - A new check is in process to guard against this type of problematic content from being deployed in the future.

- Enhance existing error handling in the Content Interpreter.

Rapid Response Content Deployment

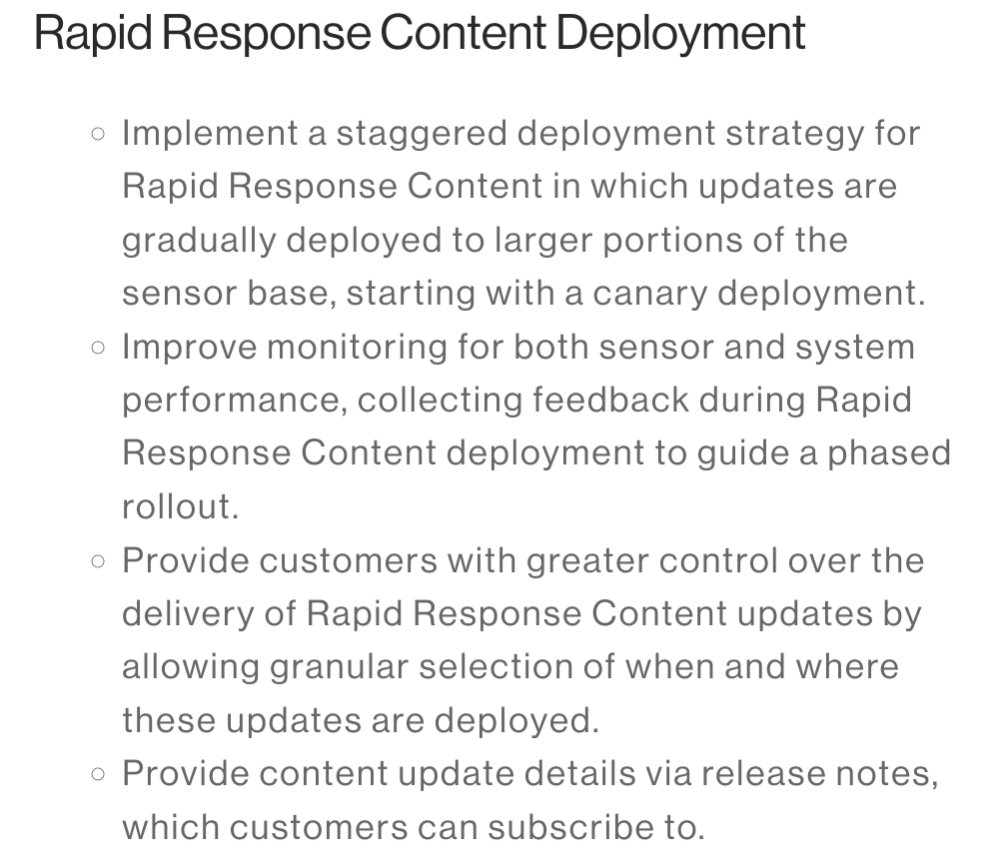

- Implement a staggered deployment strategy for Rapid Response Content in which updates are gradually deployed to larger portions of the sensor base, starting with a canary deployment.

- Improve monitoring for both sensor and system performance, collecting feedback during Rapid Response Content deployment to guide a phased rollout.

- Provide customers with greater control over the delivery of Rapid Response Content updates by allowing granular selection of when and where these updates are deployed.

- Provide content update details via release notes, which customers can subscribe to.

Source: https://www.crowdstrike.com/falcon-content-update-remediation-and-guidance-hub/
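For anyone wondering what “staggered deployment starting with a canary” means in practice, here is a minimal sketch. It is not how CrowdStrike implements it; the ring sizes, the hashing scheme, and the `deploy`/`healthy` callbacks are all assumptions made up for illustration.

```python
import hashlib

# Hypothetical rollout rings in basis points: 1% canary, then 10%, 50%, 100%.
RINGS_BP = [100, 1000, 5000, 10000]


def bucket(host_id: str) -> int:
    """Map a host to a stable bucket in 0..9999 by hashing its id."""
    digest = hashlib.sha256(host_id.encode()).digest()
    return int.from_bytes(digest[:4], "big") % 10000


def staged_rollout(hosts, deploy, healthy, max_failure_rate=0.0):
    """Deploy ring by ring; stop as soon as a ring looks unhealthy.

    Returns (completed, updated_hosts).
    """
    updated = set()
    for threshold in RINGS_BP:
        # Hosts in this ring that earlier rings have not already updated.
        batch = [h for h in hosts if bucket(h) < threshold and h not in updated]
        for host in batch:
            deploy(host)
        updated.update(batch)
        failures = sum(1 for h in batch if not healthy(h))
        if batch and failures / len(batch) > max_failure_rate:
            return False, updated   # halt: the remaining hosts stay untouched
    return True, updated
```

With something like this, an update that crashes every machine it lands on stops after the first non-empty ring instead of reaching the whole fleet, which is the point of the canary.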
