- cross-posted to:

- technology@lemmit.online

Over half of all tech industry workers view AI as overrated

Best assessment I’ve heard: Current AI is an aggressive autocomplete.

I’ve found that relying on it is a mistake anyhow; the amount of incorrect information I’ve seen from ChatGPT has been crazy. It’s not bad for getting started, but it’s like reading a grade-school kid’s homework: you need to proofread the heck out of it.

What always strikes me as weird is how trusting people are of inherently unreliable sources. Like, why the fuck does a robot get trust automatically? It’s a fuckin’ miracle it works in the first place. You double-check that robot’s work for years and it’s right every time? Yeah, okay, maybe then start to trust it. Until then, what reason is there not to be skeptical of everything it says?

People who Google something and then accept as fact whatever Google pulls out of webpages and puts at the top… confuse me. Like all machines, it has failures. Why would we assume the opposite?

At least a Google search gets you a reference you can point at. It might be wrong, it might not. Maybe it points to other references that you can verify.

ChatGPT outright makes shit up and there’s no way to see how it came to a given conclusion.

That’s a good point… so long as you follow the links and read more. My girlfriend, for example, often doesn’t.

Because the average person hears “AI” and thinks Cortana/Terminator, not a bunch of if statements.

People are dumb when it comes to things they don’t understand. I’m dumb when it comes to mechanical engineering of any kind, but I’m competent with software. It’s all about where people’s strengths lie, but some people aren’t aware enough to know they don’t know something.

My guess, wholly lacking any scientific rigor, is that humans naturally trust each other. We don’t assume the info someone shares with us is wrong unless there’s “a reason” to doubt it. Chatting with any of these LLM bots feels like talking to a person (most of the time), so there’s usually “no reason” to doubt what it spews.

If human trust wasn’t so easy to get and abuse, many scams would be much harder to pull.

I think you might be onto something. Thanks for sharing!

People trust an octopus predicting football matches.

I feel like the AI in self-driving cars is the same way. It’s like driving with a 15-year-old who just got their learner’s permit.

Turns out that getting a computer to do 80% of a good job isn’t so great. It’s that extra 20% that makes all the difference.

That 80% also doesn’t take that much effort. Automation can still be helpful depending on how much effort it is to repeatedly do it, but that 20% is really where we need to see progress for a massive innovation to happen.

I actually disagree. AI is great at doing the parts that are mentally easy but still take time. This “fancy autocomplete” is where it shines, and it can accelerate the work of a professional by an order of magnitude.

I just reviewed a PR today and the code was… bad, unusually bad for my coworkers, so I left some comments.

Then my coworker said he had used ChatGPT without really thinking about what he was copy-pasting.

I have found that it’s like having a junior programmer assistant. It’s great for “write me Python code for opening an input file from a command line argument, reading the contents into a key/value dict, then closing the file.” It’s terrible for “write me Python code for pulling data into a Redis database.”
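For reference, the “easy” request above is roughly this much code. A minimal sketch, assuming the input file holds one `key=value` pair per line; the file format and the names `read_key_values` and `main` are hypothetical, not from the comment:

```python
import argparse


def read_key_values(path):
    """Read lines of the form key=value into a dict; skip blank lines and # comments."""
    result = {}
    # The with-block closes the file automatically, even if parsing raises.
    with open(path, encoding="utf-8") as fh:
        for line in fh:
            line = line.strip()
            if not line or line.startswith("#"):
                continue
            key, sep, value = line.partition("=")
            if not sep:
                raise ValueError(f"malformed line (no '='): {line!r}")
            result[key.strip()] = value.strip()
    return result


def main(argv=None):
    # The input path comes from the command line (argv=None means sys.argv[1:]).
    parser = argparse.ArgumentParser(description="Load a key=value file into a dict.")
    parser.add_argument("path", help="input file to read")
    args = parser.parse_args(argv)
    for key, value in read_key_values(args.path).items():
        print(f"{key}: {value}")
```

To run it as a script, add `if __name__ == "__main__": main()` at the bottom and pass the file name as the first argument. The `with` block is what covers the “then closing the file” part.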

I find it’s wrong 50% of the time for certain command line switches, the Linux file structure, and the AWS CLI.

I find it’s terrible for advanced stuff like, “using the AWS CLI and jq, take all volumes in a VPC, and display the volume ID, volume size in GB, the instance ID it’s attached to, the private IP address of the instance, whether it’s gp3 or gp2, and the VPC ID in a comma-separated format, sorted by volume size.”
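For comparison, here is one way the “advanced” request could be sketched. It swaps jq for Python and assumes you have already saved the JSON from `aws ec2 describe-volumes` and `aws ec2 describe-instances`, with the usual `Volumes[].Attachments[].InstanceId` and `Reservations[].Instances[]` shape; the function name `volume_report` is hypothetical. One wrinkle that trips up generated answers: EBS volumes don’t carry a VPC ID themselves, so the VPC filter has to go through the attached instance.

```python
import csv
import io


def volume_report(volumes_json, instances_json, vpc_id):
    """Build a CSV of volumes attached to instances in one VPC, sorted by size.

    volumes_json / instances_json are the parsed outputs of
    `aws ec2 describe-volumes` and `aws ec2 describe-instances`.
    """
    # Index instances by ID so each volume attachment can be looked up directly.
    instances = {
        inst["InstanceId"]: inst
        for reservation in instances_json["Reservations"]
        for inst in reservation["Instances"]
    }
    rows = []
    for vol in volumes_json["Volumes"]:
        for att in vol.get("Attachments", []):
            inst = instances.get(att["InstanceId"])
            # Volumes have no VPC of their own; use the attached instance's VPC.
            if inst is None or inst.get("VpcId") != vpc_id:
                continue
            rows.append([
                vol["VolumeId"],
                vol["Size"],  # reported in GiB
                inst["InstanceId"],
                inst.get("PrivateIpAddress", ""),
                vol["VolumeType"],  # e.g. "gp2" or "gp3"
                inst["VpcId"],
            ])
    rows.sort(key=lambda r: r[1])  # sort by volume size
    out = io.StringIO()
    writer = csv.writer(out, lineterminator="\n")
    writer.writerow(["volume_id", "size_gib", "instance_id", "private_ip", "type", "vpc_id"])
    writer.writerows(rows)
    return out.getvalue()
```

Usage would be along the lines of loading the two JSON files (produced with `--output json`) with `json.load` and passing them in together with your VPC ID.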

Even worse at, “take all my gp2 volumes and make them gp3.”
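The gp2-to-gp3 conversion can be sketched with boto3’s EC2 client (`describe_volumes` with a `volume-type` filter, then `modify_volume`). This is a minimal sketch, not a tested migration tool: the function name and the `dry_run` flag are hypothetical, and it only lists volumes unless `dry_run=False`. Worth checking before flipping it: gp3 has a fixed baseline (3,000 IOPS, 125 MiB/s), so large gp2 volumes that currently get more IOPS from their size may need explicit `Iops`/`Throughput` settings.

```python
def migrate_gp2_to_gp3(ec2, dry_run=True):
    """Find every gp2 volume visible to `ec2` and request a change to gp3.

    `ec2` is expected to be a boto3 EC2 client, e.g. boto3.client("ec2").
    With dry_run=True (the default) nothing is modified; IDs are only returned.
    """
    kwargs = {"Filters": [{"Name": "volume-type", "Values": ["gp2"]}]}
    changed = []
    while True:
        page = ec2.describe_volumes(**kwargs)
        for vol in page["Volumes"]:
            if not dry_run:
                ec2.modify_volume(VolumeId=vol["VolumeId"], VolumeType="gp3")
            changed.append(vol["VolumeId"])
        # Follow pagination until the API stops returning a NextToken.
        token = page.get("NextToken")
        if not token:
            break
        kwargs["NextToken"] = token
    return changed
```

Calling `migrate_gp2_to_gp3(boto3.client("ec2"))` previews the list; pass `dry_run=False` once it looks right. The client is passed in rather than created inside so the function is easy to exercise with a stub.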

I recently used it to update my resume with great success. But I also didn’t just blindly trust it.

Gave it my resume and then asked it to edit my resume to more closely align with a guide I found on Harvard’s website. Gave it the guide as well, and it spit out a version of mine that much more closely resembled the guide.

Spent roughly 5 minutes editing the new version to correct any problems it had, and boom. Half an hour of work pared down to under 10 minutes.

I then had it use my new resume (I gave it a copy of the edited version) and asked it to write me a cover letter for a job (I provided the job description)

Boom. Cover letter. I spent about 10 minutes editing that piece. That new resume and cover letter led to an interview and a subsequent job offer.

AI is a tool, not an all-in-one solution.

And that’s entirely correct

No. It’s not, and hasn’t been for at least a year. Maybe the AI you’re dealing with is, but it’s shown understanding of concepts in ways that make no sense for how it was created. Gotta go.

it’s shown understanding of concepts

No it hasn’t.

It does a shockingly good analogue of “understanding” at the very least. Have you tried asking chatgpt to solve analogies? Those show up in all kinds of intelligence tests.

We don’t have agi, definitely, but this stuff has come a very long way and it’s quite close to being genuinely useful.

Even if we completely reject the “it’s AI” framing, we more or less have a natural language interface for computers that isn’t a shallow trick, and that’s awesome.

Have you tried asking chatgpt to solve analogies? Those show up in all kinds of intelligence tests.

Those two statements don’t follow from each other. And it actually gets them wrong with some frequency in ways that humans wouldn’t, forgets stuff it has already “learned”, or switches to the opposite stance midway through a sentence. Because it is just an Excel sheet on steroids.

It is, in my opinion, a shallow trick indeed.

“Excel sheet on steroids” isn’t an oversimplification; it’s just incorrect. But it doesn’t really sound like you’re particularly open to honest discussion about this, so whatever.

Well here’s the question. Is it solving them, or just regurgitating the answer? If it solves them it should be able to accurately solve completely novel analogies.

Novel analogies. Very easy to prove this independently for yourself.

Yes, it has. The most famous example is the stacking of the laptop and the markers. You may not have access, but it’s about to eclipse us, IMHO. I’m no technological fanboy either. 20 years ago I argued that it wouldn’t be possible for a computer to understand human speech. Now that is an everyday occurrence.

Depends on how you define understanding and how you test for it.

I assume we are talking LLM here?

Maybe, if you interpret its output as such.

It’s a tool. And like any tool it’s only as good as the person using it. I don’t think these people are very good at using it.

Nice one! I have heard it called a fuzzy JPG of the internet.

Too bad it’s bullshit.

If you are actually interested in the topic, here are a few good reads:

- Do Large Language Models learn world models or just surface statistics? (Jan 2023)
- Actually, Othello-GPT Has A Linear Emergent World Representation (Mar 2023)
- Eight Things to Know about Large Language Models (Apr 2023)
- Playing chess with large language models (Aug 2023)
- Language Models Represent Space and Time (Oct 2023)

As you can see, the past year has shed a lot of light on the topic.

One of my favorite facts is that it takes on average 17 years before discoveries in research find their way to the average practitioner in the medical field. While tech as a discipline may be quicker to update itself, it’s still not sub-12 months, and as a result a lot of people continue to confidently parrot things that research has recently shown to be BS.

Over half of tech industry workers have seen the “great demo -> overhyped bullshit” cycle before.

You just have to leverage the agile AI blockchain cloud.

Once we’re able to synergize the increased throughput of our knowledge capacity we’re likely to exceed shareholder expectation and increase returns company wide so employee defecation won’t be throttled by our ability to process sanity.

Sounds like we need to align on triple underscoring the double-bottom line for all stakeholders. Let’s hammer a steak in the ground here and craft a narrative that drives contingency through the process space for F24 while synthesising synergy from a cloudshaping standpoint in a parallel tranche. This journey is really all about the art of the possible after all so lift and shift a fit for purpose best practice and hit the ground running on our BHAG.

<3

What is this and how can I invest

I’m calling HR

😜

Don’t forget to make it connected to every device, ever

AIot?

Every billboard in SF is just these words shuffled

NoSQL, blockchain, crypto, metaverse, just to name a few recent examples.

AI is overhyped, but so far it is more useful than any of those other examples.

These are useful technologies when used where called for. They aren’t all-in-one solutions like the smartphone killing off cameras, PDAs, media players… I think if people looked at them as tools that fix specific problems, we’d all be happier.

Every year sometimes.

Largely because we understand that what they’re calling “AI” isn’t AI.

This is a growing pet peeve of mine. If and when actual AI becomes a thing, it’ll be a major turning point for humanity comparable to things like harnessing fire or electricity.

…and most people will be confused as fuck. “We’ve had this for years, what’s the big deal?” -_-

I also believe that will happen! We will not be prepared since many don’t understand the differences between what current models do and what an actual general AI could potentially do.

It also saddens me that many don’t know or ignore how fundamental abstract reasoning is to our understanding of how human intelligence works. And that LLMs simply aren’t intelligent in that sense (or at all, if you take a tight definition of intelligence).

I don’t get how recognizing patterns is not AI. It recognizes patterns in data, and patterns inside of patterns, and does so at a massive scale. Humans are no different: we find patterns and make predictions about what to do next.

The human brain does not simply recognise patterns, though. Abstract reasoning means that humans are able to find solutions for problems they did not encounter before. That’s what makes a thing intelligent. It is not fully understood yet what exactly gives the brain these capabilities, btw. Like, we also do not understand yet how it is possible that we can recognize our own thinking processes.

The most competent current AI models mimic one aspect of the brain: neural pathways. In our brain it’s an activity threshold, and in a neural network it’s statistics (learned weights) that decide whether a certain path is active or not; then it crosses with other paths, etc. Like a very complex decision tree.
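The “activity threshold” idea maps onto the textbook artificial neuron: a weighted sum of inputs plus a bias, pushed through an activation function. A minimal sketch with hand-picked, hypothetical weights; real networks learn millions or billions of these from data rather than having them set by hand:

```python
import math


def neuron(inputs, weights, bias):
    """One artificial 'neuron': a weighted sum of inputs squashed by a sigmoid."""
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    return 1.0 / (1.0 + math.exp(-z))


def fires(inputs, weights, bias, threshold=0.5):
    """Treat the path as 'active' when the activation crosses a threshold."""
    return neuron(inputs, weights, bias) >= threshold
```

With weights `[2, 2]` and bias `-3`, it only fires when both inputs are on, i.e. it behaves like an AND gate; stacking many such units in layers is what produces the crossing paths described above.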

So that is quite similar between AI and brains. But we actually get something like an understanding of concepts that goes beyond the decision tree but isn’t fully understood yet, as described above.

For an AI to be actually intelligent, it would probably need at least this ability: to trace back its own way through the decision tree. Maybe it even turns out that you in fact do need consciousness to have reason.

This abstract thinking… is pattern recognition. Patterns of behavior, patterns of series of actions, patterns of photons, patterns of patterns.

And there is one, I think only, concept of consciousness. And it is that it’s another layer of pattern recognition. A pattern recognizer that looks into the patterns of your own mind.

I’m unfortunately unsure how else to convey this because it seems so obvious to me. I’d need to take quite some time to figure out how to explain it any better.

Please do, but I don’t understand why you believe that changes things. Pattern recognition is the modus operandi of a brain, or rather of the connection between your senses and your brain. So perhaps it could be seen more as the way “brain data” is stored, its data type. The peculiarity is how the data type is used.

This may turn philosophical, but consider you would have the perfect pattern recognition apparatus. It would see one pattern, the ultimate pattern how everything is exactly connected. Does that make it intelligent?

To be called intelligent, you would want to be able to ask the apparatus about specific problems (much smaller chunks of the whole thing). While it may still be confined to the data type throughout the whole process, the scope of its intelligence would be defined by the way it uses the data.

See, I like this question, “what is intelligence?”

I feel way too many people are so happy to make claims about what is or isn’t intelligent without ever attempting to define intelligence.

Honestly, I’m not sure what constitutes “intelligence”, the best I can come up with is the human brain. But when I try to differentiate the brain from a computer, I just keep seeing all the similarities. The differences that are there, seem reasonable to expect a computer to replicate… eventually.

Anyway, I’ve been working off of the idea that all that reacts to stimulus is intelligent. It’s all a matter of degree and type. I’m talking bacteria, bugs, humans, plants, maybe even planets.

I’ve had exactly this discussion with a friend recently. I share your opinion, he shared what seems to be the view of the majority here. I just don’t see what the qualitative difference between the brain and a data-based AI would be. It almost seems to me like people have problems accepting the fact that they’re not more than biological machines. Like there must be something that makes them special, that gives them some sort of “soul” even when it’s in a non-religious and non-spiritual way. Some qualitative difference between them and the computer. I don’t think there necessarily is one. Look at how many things people get wrong. Look at how bad we are at simple logic sometimes. We have a better sense of some things like plausibility because we have a different set of experiences that is rooted in our physical life. I think it’s entirely possible that we will be able to create robots that are more similar to human beings than we’d like them to be. I even think it’s possible that they would have qualia. I just don’t see why not.

I know that there is a debate about machine learning AI and symbolic AI. I’m not an expert to be fair, but I have not seen any possible explanation as to why only symbolic AI would be “true” AI, even though many people seem to believe that.

As in AGI?

I’ve seen it referred to as AGI, but I think that’s wrong. ChatGPT isn’t intelligent in the slightest; it only makes guesses about which word is statistically most likely to come next. There is no thinking or problem solving involved.

A while ago I saw an article with a title along the lines of “spark of AGI in ChatGPT 4” because it chose to use a calculator tool when facing a problem that required one. That would be AI (and not AGI): it has a problem, and it learns and uses available tools to solve it.

AGI would be on a whole other level.

Edit: Grammar

The argument “it just predicts the most likely next word”, while true, massively undervalues what it even means to predict the next word or token. Largely these predictions are based on sentences and ideas the model has trained on from its data sets. It’s pretty intelligent if you think about it. You read a textbook, then when you apply the knowledge or take a test, you use what you read to form a new sentence in relation to the context of the question or problem. For the model’s “text prediction” to be correct, it has to understand certain relationships between complex ideas and objects to some capacity. Yes, it absolutely is not as good as human intelligence. But what it’s doing is much more advanced than the text prediction on your phone keyboard. It’s a step in the right direction; it’s overhyped right now, but the hype is funneling cash into research. The models are already getting more advanced. Right now half of what it says is hot garbage, but it can be pretty accurate.
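To make the contrast concrete, this is the naive reading of “predict the most likely next word”: a bigram counter that only looks at the previous word. A minimal sketch with hypothetical function names; actual LLMs instead condition on the whole preceding context with a trained transformer network, which is the gap the comment is pointing at.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for every word, which words follow it in the training text."""
    words = text.lower().split()
    follows = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def predict_next(follows, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    counts = follows.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]
```

Trained on a few sentences, it will happily continue “the” with whatever word followed “the” most often, with no notion of what the sentence is about.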

Right? Like, I, too, predict the next word in my sentence to properly respond to inputs with desired output. Sure I have personality (usually) and interests, but that’s an emergent behavior of my intelligence, not a prerequisite.

It might not formulate thoughts the way we do, but it absolutely emulates some level of intelligence, artificially.

I think so many people overrate human intelligence, thus causing them to underrate AI. Don’t get me wrong, our brains are amazing, but they’re also so amazing that they can make crazy cool AI that is also really amazing.

People just hate the idea of being meat robots, I don’t blame em.

No, INT.

What is that?

A dump stat for STR characters.

AI doesn’t necessarily mean human-level intelligence, if that’s what you mean. The AI field has wrestled with this for decades. There can be “strong AI”, which is aiming for that human-level intelligence, but that’s probably a far off goal. The “weak AI” is about pushing the boundaries of what computers can do, and that stuff has been massively useful even before we talk about the more modern stuff.

Sounds like people here are expecting to see GPAI and singularity stuff, but all they see is a pitiful LLM or other, even narrower AI applications. Remember, even optical character recognition (OCR) used to be called AI until it became so common that it wasn’t exciting anymore. What AI developers call AI today is just basic automation a few decades later.

It absolutely is AI. A lot of stuff is AI.

It’s just not that useful.

The decision tree my company uses to deny customer claims is not AI despite the business constantly referring to it as such.

There’s definitely a ton of “AI” that is nothing more than an If/Else statement.

For many years AI referred to that type of technology. It is not in fact AGI, but AI historically, in the technical field, refers more to decision trees and classification/linear regression models.

That’s basically what video game AI is, and we’re happy enough to call it that

Well… it’s a video game. We also call them “CPU” which is also entirely inaccurate.

That’s called an expert system, and has been commonly called a form of AI for decades.
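A minimal sketch of the kind of hand-written rule system being described; the rules, thresholds, and `Claim` fields are all hypothetical. Nothing here is learned from data: people wrote every branch, which is why some call it an expert system and others call it “just if/else”. Classic expert systems also had an inference engine chaining rules together; this is only the simplest first-match version.

```python
from dataclasses import dataclass


@dataclass
class Claim:
    amount: float
    policy_active: bool
    days_since_incident: int


# Hypothetical hand-written rules, checked in order; the first match decides.
RULES = [
    (lambda c: not c.policy_active, "deny: policy inactive"),
    (lambda c: c.days_since_incident > 90, "deny: filed too late"),
    (lambda c: c.amount > 10_000, "refer: needs human review"),
]


def decide(claim):
    """Return the outcome of the first rule whose condition matches, else approve."""
    for condition, outcome in RULES:
        if condition(claim):
            return outcome
    return "approve"
```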

That is indeed what most of it is, my company was doing “sentiment analysis” and it was literally just checking it against a good and bad word list
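The word-list approach looks roughly like this (the word lists are hypothetical and tiny). A minimal sketch, included mostly to show where it breaks:

```python
import re

# Hypothetical word lists; a real lexicon would be far larger.
POSITIVE = {"good", "great", "love", "excellent", "happy"}
NEGATIVE = {"bad", "terrible", "hate", "awful", "broken"}


def sentiment(text):
    """Score text by counting positive words minus negative words."""
    words = re.findall(r"[a-z']+", text.lower())
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"
```

Negation is the classic failure: “not good” scores as positive and “not bad” as negative, because the lists only count words and ignore how they combine.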

When someone corporate says “AI” you should hear “extremely rudimentary machine learning” until given more details

It’s useful at sucking down all the compute we complained crypto used

Yeah, it’s funny how that little tidbit just went quietly into the bin, never to be talked about again.

The main difference is that crypto was/is burning huge amounts of energy to run a distributed ponzi scheme. LLMs are at least using energy to create a useful tool (even if there is discussion over how useful they are).

I argue AI is much easier to pull a profit from than a currency exchange also 🙂

You really should listen rather than talk. This is not AI, it’s just a word prediction model. The media calls it AI because it sells and the companies calls it AI because it brings the stock value up.

Yes, what you’re describing is also AI.

Then we may as well call the field of statistics AI now, but sure, it’s a crazy world. :)

There are significant differences between statistical models and AI.

I work for an analytics department at a Fortune 100 company. We have a very clear delineation between what constitutes a model and what constitutes an AI.

That’s true. Statistical models are very carefully engineered and tested, while current machine learning models are created by throwing a lot of training data at the software and hoping that what the model learns isn’t complete bullshit.

Yeah, an AI is a model you can’t explain.

Optimizing compilers came directly out of AI research. The entirety of modern computing is built on things the field produced.

Yup. LLM RAG is just search 2.0 with a GPU.

For certain use cases it’s incredible, but those use cases shouldn’t be your first idea for a pipeline
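Roughly, the “search” half of RAG (retrieval-augmented generation) means: rank your documents against the question, then paste the best matches into the prompt. A minimal sketch using plain word-count cosine similarity; production setups typically use learned embeddings and a vector index instead, and the model call itself is left out. The function names and prompt wording are hypothetical.

```python
import math
import re
from collections import Counter


def _vector(text):
    """Bag-of-words vector: lowercase word counts."""
    return Counter(re.findall(r"\w+", text.lower()))


def _cosine(a, b):
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query, documents, k=2):
    """Rank documents by cosine similarity of word counts to the query."""
    q = _vector(query)
    ranked = sorted(documents, key=lambda d: _cosine(q, _vector(d)), reverse=True)
    return ranked[:k]


def build_prompt(query, documents, k=2):
    """Paste the top-k retrieved passages into the prompt ahead of the question."""
    context = "\n".join(f"- {d}" for d in retrieve(query, documents, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"
```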

Given that AI isn’t purported to be AGI, how do you define AI such that multimodal transformers capable of developing abstract world models as linear representations and trained on unthinkable amounts of human content mirroring a wide array of capabilities which lead to the ability to do things thought to be impossible as recently as three years ago (such as explain jokes not in the training set or solve riddles not in the training set) isn’t “artificial intelligence”?

THANK YOU! I’ve been saying this a long time, but have just kind of accepted that the definition of AI is no longer what it was.

I think it will be the next big thing in tech (or “disruptor” if you must buzzword). But I agree it’s being way over-hyped for where it is right now.

Clueless executives barely know what it is; they just know they want it so they can get ahead of it and remain competitive. Marketing types reporting to those executives oversell it (because that’s their job).

One of my friends is an overpaid consultant for a huge corporation, and he says they are trying to force-retrofit AI into things where it barely makes any sense… just so that they can say it’s “powered by AI”.

On the other hand, AI is much better at some tasks than humans. That AI skill set is going to grow over time. And the accumulation of those skills will accelerate. I think we’ve all been distracted, entertained, and a little bit frightened by chat-focused and image-focused AIs. However, AI as a concept is broader and deeper than just chat and images. It’s going to do remarkable stuff in medicine, engineering, and design.

Personally, I think medicine will be the most impacted by AI. Medicine has already been increasingly implementing AI in many areas, and as the tech continues to mature, I am optimistic it will have tremendous effect. Already there are many studies confirming AI’s ability to outperform leading experts in early cancer and disease diagnoses. Just think what kind of impact that could have in developing countries once the tech is affordably scalable. Then you factor in how it can greatly speed up treatment research and it’s pretty exciting.

That being said, it’s always wise to remain cautiously skeptical.

The bad part is health insurance companies are also using AI.

Common US healthcare L

AI’s ability to outperform leading experts in early cancer and disease diagnoses

It does, but it also has a black box problem.

A machine learning algorithm tells you that your patient has a 95% chance of developing skin cancer on his back within the next 2 years. Ok, cool, now what? What, specifically, is telling the algorithm that? What is actionable today? Do we start oncological treatment? According to what, attacking what? Do we just ask the patient to aggressively avoid the sun and use liberal amounts of sun screen? Do we start a monthly screening, bi-monthly, yearly, for how long do we keep it up? Should we only focus on the part that shows high risk or everywhere? Should we use the ML every single time? What is the most efficient and effective use of the tech? We know it’s accurate, but is it reliable?

There are a lot of moving parts to a general medical practice. And AI has to find a proper role that requires not just an abstract statistic from an ad-hoc study, but a systematic approach to healthcare. Right now, it doesn’t have that, because the AI model can’t tell its handlers what it is seeing, what it means, and how it fits in the holistic view of human health. We can’t just blindly trust it when there are human lives on the line.

As you can see, this seems to be relegating AI to a research role for the time being, not a diagnostic one yet.

There is a very complex algorithm for determining your risk of skin cancer: Take your age … then add a percent symbol after it. That is the probability that you have skin cancer.

You are correct, and this is a big reason for why “explainable AI” is becoming a bigger thing now.
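One reason simpler models count as “explainable”: with a logistic regression, the log-odds is a sum of per-feature terms (weight × value), so you can read off what pushed a prediction up. A minimal sketch; the features, weights, and bias are made up for illustration and are not a clinical model. Deep networks don’t decompose this neatly, which is the black-box problem described above.

```python
import math

# Hypothetical, made-up weights for illustration only; not a clinical model.
WEIGHTS = {"age_decades": 0.4, "sunburns_per_year": 0.3, "family_history": 1.2}
BIAS = -5.0


def explain(features):
    """Return the predicted probability plus each feature's additive contribution to the log-odds."""
    contributions = {name: WEIGHTS[name] * features[name] for name in WEIGHTS}
    log_odds = BIAS + sum(contributions.values())
    probability = 1.0 / (1.0 + math.exp(-log_odds))
    return probability, contributions
```

Sorting the returned contributions shows which input drove the score, which is exactly the “what, specifically, is telling the algorithm that?” question raised above.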

Like you say, “AI” isn’t just LLMs and making images. We have previously seen, for example, expert systems, speech recognition, natural language processing, computer vision, machine learning, now LLM and generative art.

The earlier technologies have gone through their own hype cycles and come out the other end to be used in certain useful ways. AI has no doubt already done remarkable things in various industries. I can only imagine that will be true for LLMs some day.

I don’t think we are very close to AGI yet. Current AI like LLMs and machine vision require a lot of manual training and tuning. As far as I know, few AI technologies can learn entirely on their own, and those that do are limited in scope. I’m not even sure AGI is really necessary to solve most problems. We may do AI “à la carte” for many years, and one day someone will stitch a bunch of things together, et voilà.

Thanks.

I’m glad you mentioned speech. Tortoise-TTS is an excellent text-to-speech AI tool that anyone can run on a GPU at home. I’ve been looking for a TTS tool that can generate a more natural-sounding voice for several years. Tortoise is somewhat labor-intensive to use for now, but to my ear it sounds much better than the more expensive cloud-based solutions. It can clone voices convincingly, too (which is potentially problematic).

Ooh thanks for the heads up. Last time I played with TTS was years ago using Festival, which was good for the time. Looking forward to trying Tortoise TTS.

Honestly, I believe AGI is currently more a compute resource problem than a software problem. A paper came out a while ago showing that individual neurons in the human brain display behavior like decently sized deep learning models. If this is true, the number of nodes required for artificial neural nets to even come close to human-like intelligence may be astronomically higher than predicted.

That’s my understanding as well: our brain is just an insane composition of incredibly simple mechanisms. It’s compositions of compositions of compositions, ad nauseam. We are manually simulating billions of years of evolution, using ourselves as a blueprint. We can get there… it’s hard to say when, but it’ll be interesting to watch.

Exactly. Plus, human consciousness might not be the most effective way to do it; there might be easier, less resource-intensive ways.

It is overrated. At least when they look at AI as some sort of brain crutch that redeems them from learning stuff.

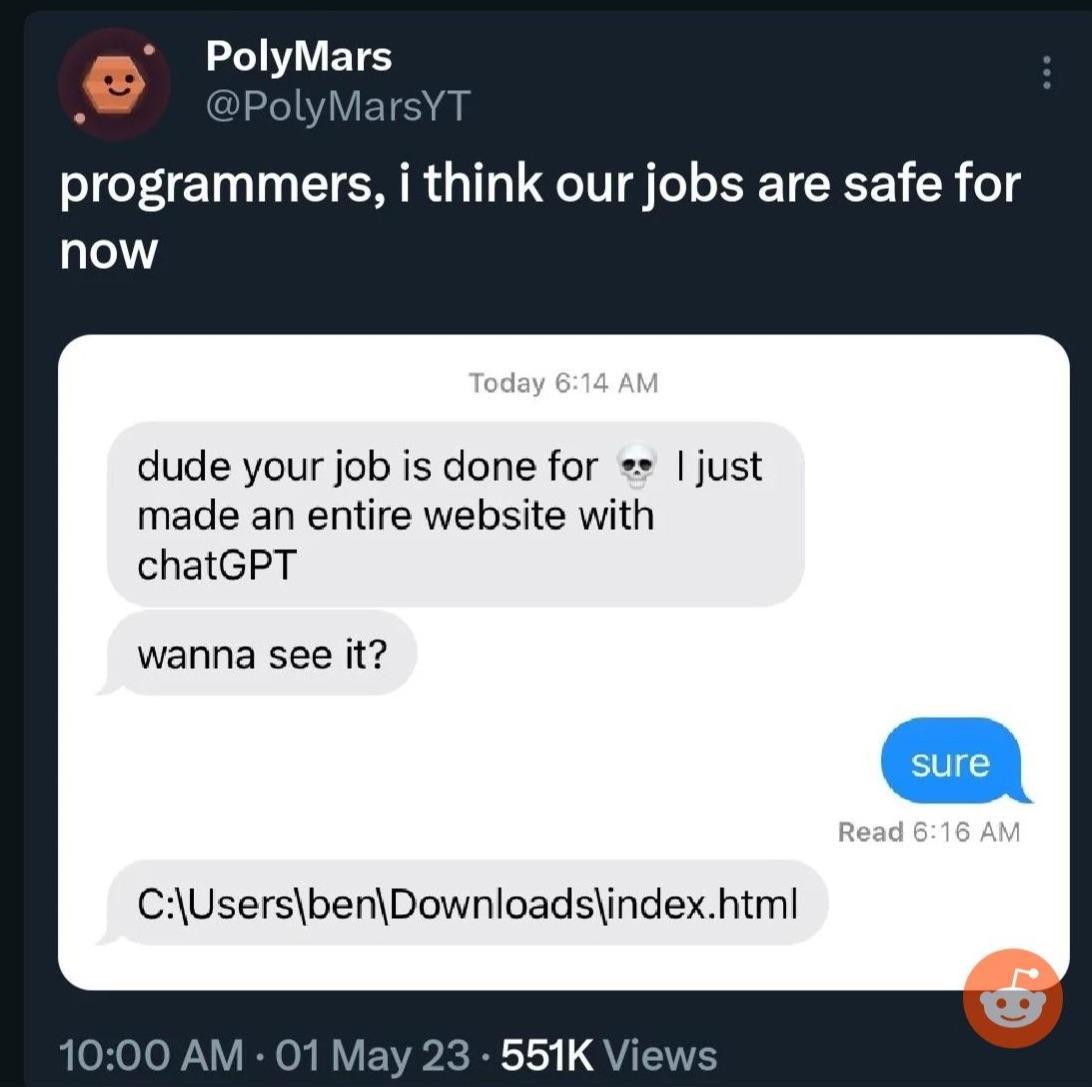

My boss now believes he can “program too” because he lets ChatGPT write scripts for him that more often than not are poor BS.

He also pastes chunks of our code into ChatGPT when we introduce bugs or aren’t finished with everything in 5 minutes, as some kind of “gotcha moment”, ignoring that the solutions he then provides don’t work.

Too many people see LLMs as authorities they just aren’t…

It bugs me how easily people (a) trust the accuracy of the output of ChatGPT, (b) feel like it’s somehow safe to use output in commercial applications or to place output under their own license, as if the open issues of copyright aren’t a ten-ton liability hanging over their head, and (c) feed sensitive data into ChatGPT, as if OpenAI isn’t going to log that interaction and train future models on it.

I have played around a bit, but I simply am not carefree/careless or am too uptight (pick your interpretation) to use it for anything serious.

Too many people see LLMs as authorities they just aren’t…

This is more a ‘human’ problem than an ‘AI’ problem.

In general it’s weird as heck that the industry is full force going into chatbots as a search replacement.

Like, that was a neat demo for a low hanging fruit usecase, but it’s pretty damn far from the ideal production application of it given that the tech isn’t actually memorizing facts and when it gets things right it’s a “wow, this is impressive because it really shouldn’t be doing a good job at this.”

Meanwhile nearly no one is publicly discussing their use as classifiers, which is where the current state of the tech is a slam dunk.

Overall, the past few years have opened my eyes to just how broken human thinking is, not as much the limitations of neural networks.

It is overrated. It has a few uses, but it’s not a generalized AI. It’s like calling a basic calculator a computer. Sure, it is an electronic computing device and makes a big difference in calculating speed for doing finances or for retail cashiers or whatever. But it’s not a generalized computing system that can compute basically anything it’s given instructions for, which is what we think of when we hear something is a “computer”. It can only do basic math. It could never be used to display a photo, much less make a complex video game.

Similarly the current thing that’s called “AI”, can learn in a very narrow subject that it is designed for. It can’t learn just anything. It can’t make inferences beyond the training material or understand. It can’t create anything totally new, it just remixes things. It could never actually create a new genre of games with some kind of new interface that has never been thought of, or discover the exact mechanisms of how gravity works, since those things aren’t in its training material since they don’t yet exist.

Some calculators can run DooM, though

Lol, those are different. I meant like a little solar powered addition, subtraction, multiplication, division and that’s it kind of calculator.

Many areas of machine learning, particularly LLMs, are making impressive progress, but the usual Y Combinator techbro types are overhyping things again. Same as every other bubble, including the original Internet one, the crypto scams, and half the bullshit companies they run that add fuck all value to the world.

The cult of bullshit around AI is a means to fleece investors. Seen the same bullshit too many times. Machine learning is going to have a huge impact on the world, same as the Internet did, but it isn’t going to happen overnight. The only certain thing that will happen in the short term is that wealth will be transferred from our pockets to theirs. Fuck them all.

I skip most AI/ChatGPT spam in social media with the same ruthlessness I skipped NFTs. It isn’t that ML doesn’t have huge potential but most publicity about it is clearly aimed at pumping up the market rather than being truly informative about the technology.

ML has already had a huge impact on the world (for better or worse), to the extent that Yann LeCun proposes that the tech giants would crumble if it disappeared overnight. For several years it’s been the core of speech-to-text, language translation, optical character recognition, web search, content recommendation, social media hate speech detection, to name a few.

ML based handwriting recognition has been powering postal routing for a couple of decades. ML completely dominates some areas and will only increase in impact as it becomes more widely applicable. Getting any technology from a lab demo to a safe and reliable real world product is difficult and only more so when there are regulatory obstacles and people being dragged around by vehicles.

For the purposes of raising money from investors it is convenient to understate problems and generate a cult of magical thinking about technology. The hype cycle and the manipulation of the narrative has been fairly obvious with this one.

Reality: most tech workers view it as fairly rated or slightly overrated according to the real data: https://www.techspot.com/images2/news/bigimage/2023/11/2023-11-20-image-3.png

Which is fair. AI at work is great, but it only does fairly simple things. Nothing I can’t do myself, but it saves my sanity and time.

It’s all I want from it, and it delivers.

It helps me write hacky scripts to solve one-off problems. Honestly, it saves me a few work days.

But it’s far from replacing anybody.

You say it’s “far”, but 70 years ago a simple calculator was the size of a house. The power of my desktop from 10 years ago is beaten by my phone, hell, maybe even my watch.

You know, you code: compute is improving rapidly, and even when vertical scaling slows down, it’s still scaling horizontally. All the while, software is getting more efficient and developing new capabilities and techniques, which only bring even more innovation.

It compounds. At this point I think the only limiting factor is how much faith the rich and powerful put in AI’s ability to make them richer. The more they invest, the faster it’ll grow.

Slightly overrated is where I would put it, absolutely. It’s overhyped, but god if the recent advancements aren’t impressive.

I remember when it first came out, I asked it to help me write a MapperConfig custom strategy, and the answer it gave me was so fantastically wrong, even with prompting, that I lost an afternoon. Honestly, the only useful thing I’ve found for it is getting it to find potential syntax errors in Terraform code that the plan might miss. It doesn’t even complement my programming skills the way a traditional search engine can; instead, it assumes a solution that is usually wrong, and you are left trying to build your house on the boilerplate sand it spits out at you.

It’s a general problem with ChatGPT (free): the more obscure the topic, the more useless the answers. It works pretty well for Wikipedia-style general knowledge, but everything that goes even a little deeper is a mess. This is true even for things that shouldn’t be that obscure, e.g. pop-culture things like movies. It can give you a summary of Star Wars, but for anything even a little outside the mainstream, it makes things up on the spot.

How much better is ChatGPT-Pro when it comes to this? Can it answer /r/tipofmytongue/ style question?

I’ve found the free one can sometimes answer tip-of-my-tongue questions, but yeah, for anything even remotely obscure it will just lie and say it doesn’t exist, especially if you stray a little too close to the puritanical guardrails. One time I was going down a rabbit hole researching human sex organ variations, and it flat out told me that the people in the Dominican Republic who grow a penis at around 12 don’t exist, until I found the name guevedoces on my own. And wouldn’t you know it, then it knew what I was talking about.

Have you used copilot? I find it to be fantastically useful.

I have also tried to use it to help with programming problems, and it is confidently incorrect a high percentage of the time (50%). It will fabricate package names, functions, and more. When you ask it to correct itself, it will give another confidently incorrect answer. Do this a few more times and it may end up suggesting the first incorrect answer it gave you, and then you realize it is literally leading you in circles.

It’s definitely a nice option to check something quickly, and it has given me some good information, but you really can’t blindly trust its output.

At least with programming, you can validate fairly quickly that it is giving bad information. With other real-life applications, like cooking/baking or trip planning, the consequences of bad information could be quite a bit worse.
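One cheap way to catch the fabricated-package problem mentioned above, sketched in Python and assuming the generated code is itself Python: parse the snippet and ask the current environment whether each imported top-level module can be found. `imported_modules`, `missing_modules`, and `totally_made_up_pkg_xyz` are hypothetical names made up for this illustration. It only flags packages that aren’t installed locally; it won’t catch invented functions inside real packages.

```python
import ast
import importlib.util


def imported_modules(source):
    """Return the top-level module names imported by a snippet of Python source."""
    names = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            # "import os.path" -> "os"
            names.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # absolute "from x.y import z" -> "x"; relative imports are skipped
            names.add(node.module.split(".")[0])
    return names


def missing_modules(source):
    """Imported top-level names that cannot be found in the current environment."""
    return sorted(
        name for name in imported_modules(source)
        if importlib.util.find_spec(name) is None
    )


# Hypothetical LLM output: the last import is a made-up package name.
snippet = """
import json
import os.path
from totally_made_up_pkg_xyz import magic
"""

print(missing_modules(snippet))
# expected: ['totally_made_up_pkg_xyz'] (unless something by that name is installed)
```

A name that does resolve isn’t proof the suggestion is right, either: it could still be a lookalike of the package you actually wanted, so read what it imports before running it.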

I’d say it’s like the dotcom bubble. Yeah, it’s incredible new and emerging tech, but that doesn’t mean it isn’t overhyped.

I mean, the dotcom bubble was overhyped; it was a bubble.

That’s what they said.

The internet was revolutionary, but dotcom was overhyped at the time.

It also produced useful things. Both statements are true, and are true of the deep learning models around now.

What’s the dotcom bubble?

A bubble is kind of a gold-rush situation, where businesses and investors en masse jump into a new or hyped market or asset type without a proper plan and business model. For example, the first recorded one: tulip mania.

The dot-com bubble was a massive bubble in the 90s, centered around the emerging concept of “internet businesses”.

I have a doctorate in computer engineering, and yeah it’s overhyped to the moon.

I’m oversimplifying it and someone will ackchyually me, but once you understand the core mechanics, the magic is somewhat diminished. It’s linear algebra and matrices all the way down.

We got really good at parallelizing matrix operations and storing large matrices and the end result is essentially “AI”.
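To make the “matrices all the way down” point concrete, here is a toy sketch in plain Python (no ML library assumed): a dense layer is a matrix-vector product plus a bias, followed by an elementwise nonlinearity, and a network just stacks these. The weights are made-up numbers, not from any real model, and real LLMs add attention, softmax, and normalization on top, which is part of the oversimplification the comment admits to.

```python
def matvec(W, x):
    """Multiply matrix W (a list of rows) by vector x."""
    return [sum(w * xj for w, xj in zip(row, x)) for row in W]


def relu(v):
    """Elementwise max(0, v_i) nonlinearity."""
    return [max(0.0, vi) for vi in v]


def dense(W, b, x):
    """One layer: y = relu(W x + b)."""
    return relu([z + bi for z, bi in zip(matvec(W, x), b)])


# Made-up illustration weights: a 2 -> 2 -> 1 network.
W1 = [[1.0, -1.0], [0.5, 2.0]]
b1 = [0.0, -1.0]
W2 = [[1.0, 1.0]]
b2 = [0.5]

x = [2.0, 1.0]
h = dense(W1, b1, x)  # hidden layer
y = dense(W2, b2, h)  # output layer
print(h, y)  # prints [1.0, 2.0] [3.5]
```

Real frameworks do the same arithmetic on huge batched matrices on GPUs, which is the parallelization the comment is talking about.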

Big emphasis on the ‘A’

That’s because it is overrated, and the people in the tech industry are actually qualified to make that determination. It’s a glorified assistant, nothing more. We’ve had these for years; they’re just getting a little bit better. It’s not gonna replace a network stack admin or a programmer anytime soon.

There is a lot of marketing about how it’s going to disrupt every possible industry, but I don’t think that’s reasonable. Generative AI has uses, but I’m not totally convinced it’s going to be this insane omni-tool just yet.

Whenever we have new technology, there will always be folks flinging shit at the wall to see what sticks. AI is no exception, and you’re most likely correct that not every problem needs an AI-powered solution.

Sure, it is already changing some fields, and more and more fields will feel the impact in the coming decades. However, we’re still pretty far from true general-purpose AI (GPAI), so letting the AI do all the work isn’t going to happen any time soon.

Garbage in, garbage out still applies here. If we don’t collect data in the appropriate way, we can’t expect to train a model on it. Once we start collecting data with ML in mind, that’s when things will start changing quickly. Currently, we have lots and lots of garbage data about everything, and that’s why we aren’t using AI more.

People who use ChatGPT to program for them deserve their programs to fail

Yeah, they should copy-paste answers from Stack Overflow like real developers.

Real developers just hit tab on whatever copilot tells them to

Guilty


You sound like someone who doesn’t know how to program and is allowing yourself to become hopelessly dependent on corporations to be able to do anything.



Ty for this great pasta moment

“What the fuck did you just fucking say about me, you little bitch? I’ll have you know I’ve authored multiple FOSS libraries, and I’ve been involved in numerous secret application deployments on Production and I have over 300 confirmed commits. I am…”

I do so try

I work in AI, and I think AI is overrated.