Or even like modern wifi. I saw a vacuum with wifi capabilities. Do I really need to check my vacuum battery level from my phone?

If you don’t pay your monthly vacuum fee, Hoover will turn it off remotely.

Unlock more power for an extra $4.99/month*

*warranty period reduced by 1 week per use of MaxPower mode

Hahaha

There’s a real life example of this somewhere right?

Time to wrap my vacuum in tin foil

I saw a Bluetooth toothbrush that sends reports to your phone on how well you brushed your teeth, like wtf?!

It also sends reports to their corporate overlords. Most probably anyway.

Oh I’m sure your health insurance would love to know the condition of your teeth to increase your rates.

Missed cleaning routine detected January 5, 2024: claim denied

EXACTLY! Hubby has one of those, that function got old pretty quickly.

TBH I use that to make sure my kids brush their teeth before the electronics get Internet in the morning.

Or to make sure they learn hacking skills. Win or win!

Your internet is solar powered?

My home assistant picked up my toothbrush, forgot it had that feature

It does seem silly to me as well, but is it really any different from people who want personal data for their sleep habits or exercise habits?

Saw a “smart” steam ironing board.

I’ll call it smart when it will iron my shirts!

There are a few that do that but feel gimmicky. It looks like the upper half of a dummy and blows steam to get the wrinkles out of the shirt.

Yes, I’ve considered it in the past.

Those things have been around forever and work very well. For domestic use it’s probably only worth it if you have a lot of shirts.

Adding Bluetooth to a vacuum cleaner does make it suck more.

Well, this is something that I actually used. I have a robo vacuum. I was preparing my home for some guests once, when I saw that the vacuum wasn’t charged fully (because it was mispositioned on its base). I put it to the right spot, let it charge for half an hour, started it and left to buy groceries.

At the store, I checked the app where I have my apartment mapped by the vacuum that shows its route and cleaning progress. And I saw that with the current charge, it will have to go back, charge and continue. So I set it from “max” power to “normal”, to let it at least finish the job.

It is a cool and useful thing.

This was not an automated model of vacuum. Connection features for automated models make sense.

Ah, ok, then yes. If it’s just an indicator on the vacuum against “indicator in an app + register + give us all your data + “buy vacuum 2.0” notifications”, then fuck them

Yes? Maybe the battery was left uncharged, or used up, so you’re waiting to do more cleaning. Why shouldn’t you be able to check?

I have an automation in my Home Assistant setup to notify me when batteries need to be replaced or charged. Currently it’s only for the smart devices in that deployment, but yes. I want my home automation to keep track of all batteries, so I can see status at a glance and be reminded if one needs attention

Or I could just plug my vacuum in when I’m done with it.

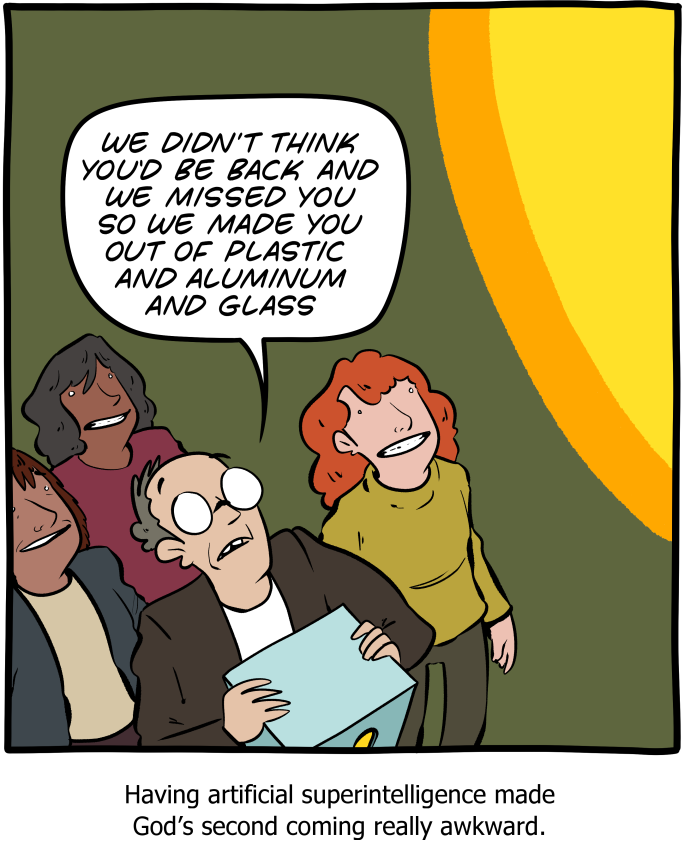

AI isn’t a product for consumers, it’s a product for investors. If somewhere down the line a consumer benefits in some way, that’s just a side effect.

Think about the ways that information tech has revolutionized our ability to do things. It’s allowed us to do math, produce and distribute news and entertainment, communicate with each other, make our voices heard, organize movements, and create and access pornography at rates and in ways that humanity could only have dreamed of only a few decades ago.

Now consider that AI is first and foremost a technology predicated on reappropriating and stealing credit for another person’s legitimate creative work.

Now imagine how much of humanity’s history has had that kind of exploitation at the forefront of its worst moments, and consider what might lie ahead with those kinds of impulses being given the rocket fuel of advanced information technology.

This is so spot on. I use AI all the time, but the hype and “we should AI all the things” is ridiculous.

I blame it on bullshit jobs. Too many people have to come up with weekly nonsense busywork tasks just to justify themselves. Also the usual FOMO. “Guys, we can’t fall behind the competition on this!”

Yep. I have middle management above me gleefully cheering the fact that ChatGPT can write their reports for them now. Well guess what, it can write those reports for me, the actual person doing the real work, and you are now redundant.

As a person with a useless boss who does almost nothing and (of course) gets paid more than me, I like this take! Let AI report on workers and watch productivity (and profits) soar!

The less ya do, the more they pays ya. It’s so dumb.

As an architect whose job it is to persuade useless bosses to do things the right way, or to get their teams to prioritize it, I love this idea. Let AI take over boss work. They would be so much easier to work with.

Like many people, I use it like we used to use Google. Because Google (and most other search engines) suck now, thanks to all the SEO spam out there. My job requires me to be a “jack of all trades” (I am a duct taper with a bullshit job in David Graeber parlance). I have to cover myriad technical things. I usually know the high level way to do things, but I frequently need help with the specifics (rusty on the syntax, etc), so I use the free ChatGPT for that kind of thing. It’s been extremely helpful. I was also the first person to bring it to the attention of my boss ages ago when it first came onto the scene.

Rather predictably, my boss now acts like he discovered (borderline invented) it and is always nagging everyone to use it to get their work done faster.

AI has already put some people out of work and will continue to be disruptive. There will be a lot more layoffs coming, is my guess. And it doesn’t really matter if the AI is good or not. If the C-Suite thinks they can save money and get rid of “lazy workers” they will absolutely 100% do it. We’ve seen over and over again how customer service and product quality hardly even matter any more.

I appreciate your take on it: replace the useless middle manager whip-cracker types. Hopefully we see a lot of that…

My company did a big old round of AI layoffs and now it’s barely functional at all because it got rid of a bunch of people actually doing things and kept all of the loud idiots.

They should have asked their AI for guidance on layoffs, LoL

I work for a fairly big IT company. They’re currently going nuts about how generative AI will change everything for us and have been for the last year or so. I’m yet to see it actually be used by anyone.

I imagine the new Microsoft Office copilot integration will be used only slightly more than Clippy was back in the day.

But hey, maybe I’m just an old man shouting at the AI powered cloud.

Copilot is often a brilliant autocomplete, that alone will save workers plenty of time if they learn to use it.

I know that as a programmer, I spend a large percentage of my time simply transcribing correct syntax of whatever’s in my brain to the editor, and Copilot speeds that process up dramatically.

problem is when the autocomplete just starts hallucinating things and you don’t catch it

If you blindly accept autocompletion suggestions then you deserve what you get. AIs aren’t gods.

AIs aren’t gods.

Probably will happen soon.

OMG thanks for being one of like three people on earth to understand this

you don’t catch it

That’s on you then. Copilot even very explicitly notes that the AI can be wrong, right in the chat. If you just blindly accept anything not confirmed by you, it’s not the tool’s fault.

I feel like the process of getting the code right is how I learn. If I just type vague garbage in and the AI tool fixes it up, I’m not really going to learn much.

Autocomplete doesn’t write algorithms for you, it writes syntax. (Unless the algorithm is trivial.) You could use your brain to learn just the important stuff and let the AI handle the minutiae.

Where “learn” means “memorize arbitrary syntax that differs across languages”? Anyone trying to use copilot as a substitute for learning concepts is going to have a bad time.

AI can help you learn by chiming in about things you didn’t know you didn’t know. I wanted to compare images to ones in a dataset, that may have been resized, and the solution the AI gave me involved blurring the images slightly before comparing them. I pointed out that this seemed wrong, because won’t slight differences in the files produce different hashes? But the response was that the algorithm being used was perceptual hashing, which only needs images to be approximately the same to produce the same hash, and the blurring was to make this work better. Since I know AI often makes shit up I of course did more research and tested that the code worked as described, but it did and was all true.

If I hadn’t been using AI, I would have wasted a bunch of time trying to get the images pixel perfect identical to work with a naive hashing algorithm because I wasn’t aware of a better way to do it. Since I used AI, I learned more about what solutions are available, more quickly. I find that this happens pretty often; there’s actually a lot that it knows that I wasn’t aware of or had a false impression of. I can see how someone might use AI as a programming crutch and fail to pay attention or learn what the code does, but it can also be used in a way that helps you learn.
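For anyone curious what "approximately the same image gives the same hash" looks like in practice, here is a minimal sketch of one simple perceptual hash (average hash). It is not necessarily the algorithm the AI suggested above; it assumes grayscale images stored as plain lists of rows at least 8 pixels on each side, and the function names (`shrink`, `average_hash`, `hamming`) are made up for illustration. The block averaging in `shrink` plays roughly the role of the blur step.

```python
def shrink(pixels, size=8):
    """Downscale a grayscale image (list of rows) to size x size by averaging blocks.

    Assumes the image is at least `size` pixels in each dimension.
    """
    h, w = len(pixels), len(pixels[0])
    small = []
    for by in range(size):
        y0, y1 = by * h // size, (by + 1) * h // size
        row = []
        for bx in range(size):
            x0, x1 = bx * w // size, (bx + 1) * w // size
            block = [pixels[y][x] for y in range(y0, y1) for x in range(x0, x1)]
            row.append(sum(block) / len(block))
        small.append(row)
    return small


def average_hash(pixels, size=8):
    """Return a size*size-bit integer: one bit per cell, set if the cell is brighter than the mean."""
    flat = [v for row in shrink(pixels, size) for v in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for v in flat:
        bits = (bits << 1) | (1 if v > mean else 0)
    return bits


def hamming(a, b):
    """Number of differing bits between two hashes; small means 'looks similar'."""
    return bin(a ^ b).count("1")


# The same diagonal gradient at two resolutions, plus an inverted copy.
big = [[(x + y) * 4 for x in range(32)] for y in range(32)]
small = [[(x + y) * 8 for x in range(16)] for y in range(16)]
inverted = [[248 - v for v in row] for row in big]

same_image_distance = hamming(average_hash(big), average_hash(small))
different_image_distance = hamming(average_hash(big), average_hash(inverted))
```

In real code you would compare the Hamming distance against a threshold rather than demand identical hashes, and you would probably reach for an existing image-hashing library instead of rolling your own.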

I use AI a lot as well as a SWE. The other day I used it to remove an old feature flag from our server graphs along with all now-deprecated code in one click. Unit tests still passed after, saved me like 1-2 hours of manual work.

It’s good for boilerplate and refactors more than anything

AI bad tho!!!

A friend of mine works in marketing (think “websites for small companies”). They use an LLM to turn product descriptions into early draft advertising copy and then refine from there. Apparently that saves them some time.

It saves a ton of time. I’ve worked with clients before and I’ll put a lorem ipsum as a placeholder for text they’re supposed to provide. Then the client will send me a note saying there’s a mistake and the text needs to be in English. If the text is almost close enough to what the client wants, they might actually read it and send edits if you’re lucky.

I’ve actually pushed products out with lorem ipsum on it because the client never provided us with copy. As you say they seem to think that the copy is in there, but just in some language they can’t understand. I don’t know how they can possibly think that since they’ve never sent any, but if they were bright they wouldn’t work in marketing.

I’m a developer with about 15 years of experience. I got into my company’s copilot beta program.

Now maybe you are some magical programmer that knows everything and doesn’t need stack overflow, but for me it’s all but completely replaced it. Instead of hunting around for a general answer and then applying it to my code, I can ask very explicitly how to do that one thing in my code, and it will auto generate some code that is usually like 90% correct.

Same thing when I’m adding a class that follows a typical pattern elsewhere in my code…well it will auto generate the entire class, again with like 90% of it being correct. (What I don’t understand is how often it makes up enum values, when it clearly has some context about the rest of my code) I’m often shocked as to how well it knew what I was about to do.

I have an exception that’s not quite clear to me? Well just paste it into the copilot chat and it gives a very good plain English explanation of what happened and generally a decent idea of where to look.

And this is a technology in its infancy. It’s only been released for a little over a year, and it has definitely improved my productivity. Based on how I’ve found it useful, it will be especially good for junior devs.

I know it’s in, especially on lemmy, to shit on AI, but I would highly recommend any dev get comfortable with it because it is going to change how things are done and it’s, even in its current form, a pretty useful tool.

It’s in to shit on AI because it’s ridiculously overhyped, and people naturally want to push back on that. Pretty much everyone agrees it’ll be useful, just not replace all the jobs useful.

And a chunk of the jobs it will replace were on their way out the door anyway. There are already plenty of fast food places with kiosks to order, and they haven’t replaced any specific person, just a small function of one job.

I expect it’ll be useful on the order of magnitude of Google Search, not revolutionary on the scale of the internet. And I think that’s a reasonable amount of credit.

The problem with GenAI is the same as any system. Garbage in equals garbage out. Couple it with no tuning and it’s a disaster waiting to happen. Good GenAI can exist, but you need some serious data science and time to tune it. Right now that puts the cost outside of the “do it by hand” realm (and by quite a bit). LLMs are useful given that they’ve been trained on general human writing patterns, but for a company to be able to replace their functions with highly specific tasks they need to develop and push their own data sets and training which they don’t want to spend the money on.

It will take the market by storm, just like NFT with bored apes.

I’m yet to see it actually be used by anyone.

None of your programmers are using genAI to prototype, analyze errors or debug faster? Either they are seriously missing out or you’re not following.

I think the “AI will revolutionize everything” hype is stupid, but I definitely get a lot of added productivity when coding with it, especially when discovering APIs. I do have to double-check, but overall I’m definitely faster than before. I think it’s good at reducing the mental load of starting a new task too, because you can ask for some ideas and pick what you like from it.

Mine aren’t. Because it has been mandated by the execs not to because there is a potential security risk in leaking our code to AI servers.

This. I am stunned to read how many devs are allowed to send proprietary code to a third-party tool.

There are company agreements to secure that, we are not using the public websites.

My company has an agreement with a genAI provider so the data won’t be leaked, we have an internal website, it’s not the public one. We can also add our own data to the model to get results relevant to the company’s knowledge.

I’d love a boosted Clippy powered by AI! It would have incredible animations while sitting there in the corner doing nothing!

I was watching this morning’s WAN podcast (linustechtips) and they had an interview with Jim Keller talking mostly about AI.

The portions about AI felt like he was living in an alternate universe, predicting AI will be used literally everywhere.

My bullshit-o-meter hit the stars but comments on the video seem positive 🤔

I think it massively depends on what your job is. I know quite a lot of people at work use AI to draft out documents, it’s a good way to get started. I also suspect that quite a lot of documents are 100% AI since we have a lot of stuff that we write but no one ever reads, so what’s the point in putting effort in?

I tried to use it to write some documentation for various processes at work, but the AI doesn’t know about our processes and I couldn’t figure out a way to tell it about them, so it either missed steps or just made stuff up. So for me it’s not really useful.

So it works as long as you don’t need anything too custom. But then we have engineers that go out to businesses and presumably they don’t use AI for anything because there’s nothing it can do that would be useful for them.

So right there in one business you have three groups of people, people who use AI a lot, people who’ve tried to use AI and don’t find it useful, and people who basically have no use for AI.

I have one guy using AI to generate a status report by compiling all his reports’ statuses!

I’m hoping to be one of the people to benefit, if Security would approve AI. As a DevOps guy, I’m continually jumping among programming and scripting languages and it sometimes takes a bit to change context. If I’m still in Python mode, why shouldn’t I get a jump by AI translating to Java, or Groovy, or Go, or PowerShell, or whatever flavor of shell script? As the new JavaScript “expert” at my company, why can’t I continue avoiding Learning JavaScript?

So you are saying that AI today is like Bluetooth today

I use Bluetooth all the time for speakers and headsets, also the PlayStation 3 controller was Bluetooth, so would that not mean AI will be a top of the line tool in 2 years? I personally don’t use it for anything at the moment, but in 2003 Plantronics released Bluetooth headsets for corporate environments (IP phones usually still used to this day).

Seems like more of a we aren’t sure where this tool is most useful yet, but it will be used by many people around us.

That’s very fair. I don’t like how unpredictable Bluetooth is when you have multiple peripherals and multiple hosts paired to each other and all within range of each other.

I have two pairs of AirBudz, one AirBudz pro, and an Anker speaker attached via BT to my phone, and all of them function perfectly, exactly when I want… Bluetooth works amazingly.

You never have audio coming out of a device you didn’t expect?

Nope! The budz only produce audio when they’re in my ears or my partner’s. I can select to mirror audio to both of us at the same time when we’re exercising. The speaker only works when I turn it on, but it connects immediately every time. I suppose I forgot about my car, which works but has a delay cuz the infotainment system kinda sucks.

Say what you want about bluetooth, but I’m amazed by the battery life of those devices

I remember seeing a DankPods video about a rice cooker with quote-unquote “AI rice” technology. Spoiler alert: there is no AI in there.

So… it’s not even putting it in something where it’s not useful, it’s straight up false advertising.

a simple “if” “then” algorithm

Corporate: (☞゚ヮ゚)☞ Is this AI?

It’s usually an entirely mechanical timer with a spool or a simple sensor that shuts off the heating when the water is gone. No coding required.

Imo, that’s coding, just analog LOL

“If” Sensor reached temperature. -“then” Cut power.

Disclaimer: I have ZERO coding knowledge of any kind.
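If someone did write that "if/then" down as software, it might look like the sketch below. Everything here is assumed for illustration (the 105 °C cutoff, the function names, the list of readings); a basic cooker does the same thing mechanically, as described above. The idea is that the pot sits near the boiling point while water remains and only climbs past it once the water is gone.

```python
CUTOFF_C = 105.0  # assumed threshold, a bit above the boiling point of water


def heater_should_stay_on(pot_temp_c, cutoff_c=CUTOFF_C):
    """The whole 'algorithm': keep heating until the sensor passes the cutoff."""
    return pot_temp_c < cutoff_c


def cook(readings, cutoff_c=CUTOFF_C):
    """Walk through temperature readings; return the index where power is cut, or None."""
    for i, temp in enumerate(readings):
        if not heater_should_stay_on(temp, cutoff_c):
            return i  # "then": cut power
    return None  # water never ran out in this trace


cut_at = cook([25, 80, 100, 100, 100, 108])
still_cooking = cook([25, 80, 100])
```

No training data, no model, just one comparison.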

It depends. If there is a component that evaluates the sensor status through some form of runtime and then regulates the temperature based on that, you could call it coding (I don’t think this is ever done since it has no practical use). Else, it’s just system architecture.

Of course, there is some overlap within those areas because they both rely on logic, but the latter would not be considered coding.

If you study CS, you will most likely have a course that gives you a basic idea about system architecture and if you study engineering, you will probably have to code some small thing or at least have a course on the basics. So yeah, not entirely distinct.

Give me Bluetooth rice or give me death

Bluetooth rice should be blue and also should make your teeth blue (because blue tooth, get it?)

I suck at comedy.

They’ve been claiming things like rice cookers had AI for decades, so at least this isn’t part of the current AI hype.

Oh right.

No no, they mean “artificially interesting” rice

“Now that’s what I call rice”

-Tefal’s marketing team, I guess

Or like the blockchain 5 years ago

Or like VR 10 years ago

Or like 3D 15 years ago

It is the hot new thing that you have to use for the VCs to fund your company and for investors to buy your stocks, regardless of the actual utility. AI does seem to have at least more possibilities of usage than those technologies, but it also has an incredibly higher possibility of misuse that is being completely ignored by these companies.

It was always clear that VR, 3D, blockchain were fads. But AI is already useful as is. The hype may not be as high in the future but AI is here to stay.

VR is also around, it’s possibly the most popular it’s ever been. It’s still a small niche compared to its initial promise.

Linux on desktop is more popular than VR…

But that’s just because

2001 2002 2003 2004 2005 2006 2007 2008 2009 2010 2011 2012 2013 2014 2015 2016 2017 2018 2019 2020 2021 2022 2023 2024 is the year of the Linux desktop!

By the news I’m seeing about windows and its new features that is bloated and restricting its users. It won’t be long before it’s the year of the Linux desktop.

the news I’m seeing about windows and its new features that is bloated and restricting its users.

You sound like every Linux user for the last 30 years.

… I use Arch btw

Almost all my colleagues are using genAI every day, I don’t know anyone using VR regularly.

But how will we provide an enhanced, metaverse 3D AR blockchain-backed cavity reduction without an LLM?

Nobody used blockchain besides scam artists and money laundering schemes. VR was a super niche toy and was not shoved into anything. 3D was… Okay you have a point with that one, but AI can actually be pretty useful where it’s actually useful.

Most people now use ChatGPT to some extent voluntarily, without it being shoehorned into an otherwise unrelated product. My mom told me how she was using it to help her rewrite her resume just the other day. I agree that there’s a fad of it being forced into everything that doesn’t need it, but I think it’s here to stay.

Also, agree to disagree on it having an “incredibly higher possibility of misuse”. It’s just a tool to let people do things they want to do, whether their intentions are good or not.

Or like 4D 20 years ago

Or 5D 30 years ago

In all honesty, it seems like they’ve been trying to make 3D happen every ten to fifteen years since the 1950s. And they tried making VR a thing in the 80s and 90s, too until it went to sleep for a little while.

I remember wanting one of these so bad when they came out. I even got to play one at Circuit City one time.

I played one at… I want to say Wal-mart (or maybe K-mart?). The demo station was on display for maybe a year, but it was never working except for one glorious time I got to play… uh, something, I think either tennis or the Wario platformer. Clearly the game didn’t stick in my head, but the overall experience was amazing.

That sounds cool, I don’t have a smart home setup, but Bluetooth sounds kinda nice to me for changing the temperature on the thermostat in the house, car not so much. Now I do know many people who use Bluetooth to cast their phone calls to their hands free devices in cars, as well as to hook up those diagnostic tools and have the error codes go to your phone instead of buying a product that costs hundreds of dollars to have a screen you would only use for that one purpose.

Freon, or it used to be

This reminds me I’m into season 5 of Burn Notice and Sam said at one point, “I’m on Bluetooth if you need me”. It was a weird reminder that once upon a time people were paid to advertise just… Bluetooth, because that’s a brand name. These days it’s just everywhere.

The product placements in that show are not exactly subtle. Excellent show though, I did not expect it to hold up so well.

So. You would have to be within what, 5 meters max to talk to him? What does that even mean?

Boomers learned what Bluetooth was because they started making AirPod-style single ear headsets for cell phones. Everyone called them “a Bluetooth”.

So if you said “I’m on Bluetooth” it means you’d have your big clunky EarPod on, ready to answer a call at a moment’s notice.

A former fucking spy wouldn’t be caught dead using early Bluetooth for sensitive conversations though (and probably not current BT either). Considering every other segment of that show is a “here’s a hack to show how fragile the house of cards of modern society is, and how spies just navigate through it with impunity”, it’s pretty funny they leaned into this one.

It was Sam not Michael. That’s exactly the kind of dumb shit Sam would do.

Well they use little bluetooth style earpieces all the time to talk to each other, but this one time he name-checks it very awkwardly. I think they just assume anybody listening would have to target their comms specifically, and most of the time they’re relying on obscurity. I don’t think it would make sense for most of their targets to listen out for every bluetooth dongle that enters the building or whatever.

They also don’t have government resources so they have to make do with what they can get their hands on, which is a running theme. One of the times he’s working for the government they specifically call out that his earpiece is so deep in his ear canal there’s no way anybody will overhear what’s being said to him.

You sure they didn’t mean it like “put it on a USB?” As in, they use the name of the connectivity technology to imply a single class of product that might use it?

That’s exactly what they meant, it’s just a weird way to say it that they only used because the consortium paid them to

Edit: extra word removed

Didn’t they say “bing it” at one point in CSI or something?

Apparently that was Hawaii 5-0. My favourite CSI moment though was the huge ad for Microsoft Photosynth

I did a rewatch not too long ago. Yeah the paid advertising is a bit cringe now but the show itself holds up very well.

I am currently in season 6 and also laughed about that line. Still a good show (although the b-roll scenes are also often quite cringe these days)

Like bluetooth. So, not particularly good even for the applications it’s supposed to be used for

Almost a good take. Except that AI doesn’t exist on this planet, and you’re likely talking about LLMs.

In 2022 AI evolved into AGI and LLM into AI. Languages are not static as shown by old English. Get on with the times.

Changes to language to sell products are not really the language adapting but being influenced and distorted

People have used AI to describe things like chatbots, video game bots, etc for a very long time. Don’t no true Scotsman the robots.

You are unfortunately wrong on this one. The term “AI” has been used to describe things other than AGI since basically the invention of computers that could solve problems. The people that complain about using “AI” to describe LLMs are actually the ones trying to change language.

https://en.m.wikipedia.org/wiki/Artificial_intelligence#History

I think the modern pushback comes from people who get their understanding of technology from science fiction. SF has always (mis)used AI to mean sapient computers.

LLMs are a way of developing an AI. There are lots of conspiracy theories in this world that are real; it’s better to focus on them rather than make stuff up.

There really is an amazing technological development going on and you’re dismissing it on irrelevant semantics

The acronym AI has been used in game dev for ages to describe things like pathing and simulation which are almost invariably algorithms (such as A* used for autonomous entities to find a path to a specific destination) or emergent behaviours (which are also algorithms where simple rules are applied to individual entities - for example each bird in a flock - to create a complex whole from many such simple agents, an example of this in gamedev being Steering Behaviours, outside gaming it would be the Game Of Life).
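To give a feel for how small this kind of game "AI" can be, here is a minimal sketch of A* on a tile grid. The grid format (`#` for walls), 4-way movement with unit step cost, the Manhattan-distance heuristic and the function name `astar` are all assumptions for illustration, not taken from any particular engine.

```python
import heapq


def astar(grid, start, goal):
    """Find a shortest 4-connected path on a grid of strings where '#' is a wall.

    start and goal are (row, col) tuples. Returns a list of cells or None.
    """
    def heuristic(cell):
        # Manhattan distance never overestimates with unit-cost 4-way moves.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(heuristic(start), 0, start)]  # (estimated total, cost so far, cell)
    came_from = {}
    best_cost = {start: 0}

    while open_heap:
        _, cost, cell = heapq.heappop(open_heap)
        if cell == goal:
            path = [cell]
            while cell in came_from:  # walk back to the start
                cell = came_from[cell]
                path.append(cell)
            return path[::-1]
        if cost > best_cost[cell]:
            continue  # stale heap entry, a cheaper route was found already
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            inside = 0 <= nr < len(grid) and 0 <= nc < len(grid[0])
            if inside and grid[nr][nc] != "#":
                new_cost = cost + 1
                if new_cost < best_cost.get((nr, nc), float("inf")):
                    best_cost[(nr, nc)] = new_cost
                    came_from[(nr, nc)] = cell
                    heapq.heappush(
                        open_heap, (new_cost + heuristic((nr, nc)), new_cost, (nr, nc))
                    )
    return None


GRID = [
    "....",
    ".##.",
    "....",
]
path = astar(GRID, (0, 0), (2, 3))
blocked = astar([".#."], (0, 0), (0, 2))
```

An NPC "finding its way to the player" is often not much more than this running every so often.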

They didn’t so much “evolve” as AI scared the shit out of us at such a deep level we changed the definition of AI to remain in denial about the fact that it’s here.

Since time immemorial, passing a Turing test was the standard. As soon as machines started passing Turing tests, we decided Turing tests weren’t such a good measure of AI.

But I haven’t yet seen an alternative proposed. Instead of using criteria and tasks to define it, we’re just arbitrarily saying “It’s not AGI so it’s not real AI”.

In my opinion, it’s more about denial than it is about logic.

You’re using AI to mean AGI and LLMs to mean AI. That’s on you though, everyone else knows what we’re talking about.

Words have meanings. Marketing morons are not linguists.

artificial intelligence noun

1 : the capability of computer systems or algorithms to imitate intelligent human behavior

also, plural artificial intelligences : a computer, computer system, or set of algorithms having this capability

2 : a branch of computer science dealing with the simulation of intelligent behavior in computers

https://www.merriam-webster.com/dictionary/artificial%20intelligence

As someone who still says a kilobyte is 1024 bytes, I agree with your sentiment.

Amen. Kibibytes my ass ;)

Words might have meanings but AI has been used by researchers to refer to toy neural networks longer than most people on Lemmy have been alive.

This insistence that AI must refer to human type intelligence is also such a weird distortion of language. Intelligence has never been a binary, human level indicator. When people say that a dog is intelligent, or an ant hive shows signs of intelligence, they don’t mean it can do what a human can. Why should AI be any different?

You honestly don’t seem to understand. This is not about the extent of intelligence. This is about actual understanding. Being able to classify a logical problem / a thought into concepts and processing it based on properties of such concepts and relations to other concepts. Deep learning, as impressive as the results may appear, is not that. You just throw training data at a few billion “switches” and flip switches until you get close enough to a desired result, without being able to predict how the outcome will be if a tiny change happens in input data.

I mean that’s a problem, but it’s distinct from the word “intelligence”.

An intelligent dog can’t classify a logic problem either, but we’re still happy to call them intelligent.

With regards to the dog & my description of intelligence, you are wrong: Based on all that we know and observe, a dog (any animal, really) understands concepts and causal relations to varying degrees. That’s true intelligence.

When you want to have artificial intelligence, even the most basic software can have some kind of limited understanding that actually fits this attempt at a definition - it’s just that the functionality will be very limited and pretty much appear useless.

Think of it this way:

deterministic algorithm -> has concepts and causal relations (but no consciousness, obviously), results are predictable (deterministic) and can be explained

deep learning / neural networks -> does not implicitly have concepts nor causal relations, results are statistical (based on previous result observations) and can not be explained -> there’s actually a whole sector of science looking into how to model such systems’ way to a solution

Addition: the input / output filters of pattern recognition systems are typically fed through quasi-deterministic algorithms to “smoothen” the results (make output more grammatically correct, filter words, translate languages)

If you took enough deterministic algorithms, typically tailored to very specific problems & their solutions, and were able to use those as building blocks for a larger system that is able to understand a larger part of the environment, then you would get something resembling AI. Such a system could be tested (verified) on sample data, but it should not require training on data.

Example: You could program image recognition using math to find certain shapes, which in turn - together with colour ranges and/or contrasts - could be used to associate object types, for which causal relations can be defined, upon which other parts of an AI could then base decision processes. This process has potential for error, but in a similar way that humans can mischaracterize the things we see - we also sometimes do not recognize an object correctly.

OP is an idiot though, hope we can agree on that one.

Telling everyone else how they should use language is just an ultimately moronic move. After all we’re not French, we don’t have a central authority for how language works.

Telling everyone else how they should use language is just an ultimately moronic move. After all we’re not French, we don’t have a central authority for how language works.

There’s a difference between objecting to misuse of language and “telling everyone how they should use language” - you may not have intended it, but you used a straw man argument there.

What we all should be acutely aware of (but unfortunately many are not) is how language is used to harm humans, animals or our planet.

Fascists use language to create “outgroups” which they then proceed to dehumanize and eventually violate or murder. Capitalists speak about investor risks to justify return on investment, and proceed to lobby for de-regulation of markets that causes human and animal suffering through price gouging and factory farming livestock. Tech corporations speak about “Artificial Intelligence” and proceed to persuade regulators that - because there are “intelligent” systems - this software may be used for autonomous systems that proceed to cause injury and death on malfunctions.

Yes, all such harm can be caused by individuals in daily life - individuals can be murderers or extort people over something they really need, or a drunk driver can cause an accident that kills people. However, the language that normalizes or facilitates such atrocities or dangers on a large scale is dangerous, and therefore I will continue calling out those who want to label the shitty penny market LLMs and other deep learning systems as “AI”.

I’ve given up trying to enforce the traditional definitions of “moot”, “to beg the question”, “nonplussed”, and “literally”, and it’s helped my mental health. A little. I suggest you do the same; it’s a losing battle and the only person who gets hurt is you.

Nobody has yet met this challenge:

Anyone who claims LLMs aren’t AGI should present a text processing task an AGI could accomplish that an LLM cannot.

Or if you disagree with my

Oops accidentally submitted. If someone disagrees with this as a fair challenge, let me know why.

I’ve been presenting this challenge repeatedly and in my experience it leads very quickly to the fact that nobody — especially not the experts — has a precise definition of AGI

While they are amazingly effective at many problems we throw at them, I’m not convinced that they’re generally intelligent. What I do know is that in their current form, they are not tractable systems for anything but relatively small problems since compute and memory costs increase quadratically with the number of steps.
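A small sketch of where the quadratic growth comes from, assuming "steps" here means the sequence length of a standard self-attention layer, where every token is scored against every other token. The function names and the placeholder vectors are made up for illustration; real implementations use tensors and various tricks, but the naive pairwise table is still n x n.

```python
from typing import List


def attention_scores(tokens: List[List[float]]) -> List[List[float]]:
    """Dot product of every token vector with every other one: an n x n table."""
    return [[sum(a * b for a, b in zip(q, k)) for k in tokens] for q in tokens]


def score_count(n: int) -> int:
    """Number of pairwise scores computed for a sequence of n tokens."""
    tokens = [[1.0, 0.0]] * n   # placeholder vectors; only the count matters
    scores = attention_scores(tokens)
    return sum(len(row) for row in scores)


for n in (10, 20, 40):
    print(n, score_count(n))
```

Doubling the sequence length quadruples the number of scores, which is why long inputs get expensive so quickly.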

https://arxiv.org/abs/2303.12712 has a good take on this question

“Write an essay on the rise of ai and fact check it.”

“Write a verifiable proof of the four colour problem”

“If p=np write a python program demonstrating this, else give me a high-level explanation why it is not true.”

This is such a half-brained response. Yes, “actual” AI in the form of simulated neurons is pretty far off, but it’s fairly obvious that when people say AI they mean LLMs and other advanced forms of computing. There are other forms of AI besides LLMs anyways, like image analyzers.

The only thing half-brained is the morons who advertise any contemporary software as “AI”. The “other forms” you mention are machine learning systems.

AI contains the word “intelligence”, which implies understanding. A bunch of electrons manipulating a bazillion switches following some trial-and-error set of rules until the desired output is found is NOT that. That you would think the term AI is even remotely applicable to any of those examples shows how bad the brain rot is that is caused by the overabundant misuse of the term.

I bet you were a lot of fun when smartphones first came out

Yes. And a cocktail is not a real cock tail. Thank God.

What do you call the human brain then, if not billions of “switches” as you call them that translate inputs (senses) into an output (intelligence/consciousness/efferent neural actions)?

It’s the result of billions of years of evolutionary trial and error to create a working structure of what we would call a neural net, which is trained on data (sensory experience) as the human matures.

Even early nervous systems were basic classification systems. Food, not food. Predator, not predator. The inputs were basic olfactory sense (or a more primitive chemosense probably) and outputs were basic motor functions (turn towards or away from signal).

The complexity of these organic neural networks (nervous systems) increased over time and we eventually got what we have today: human intelligence. Although there are arguably different types of intelligence, as it evolved among many different phylogenetic lines. Dolphins, elephants, dogs, and octopuses have all been demonstrated to have some form of intelligence. But given the information in the previous paragraph, one can say that they are all just more and more advanced pattern recognition systems, trained by natural selection.

The question is: where do you draw the line? If an organism with a photosensitive patch of cells on top of its head darts in a random direction when it detects sudden darkness (perhaps indicating a predator flying/swimming overhead, though not necessarily with 100% certainty), would you call that intelligence? What about a rabbit, who is instinctively programmed by natural selection to run when something near it moves? What about when it differentiates between something smaller or bigger than itself?

What about you? How will you react when you see a bear in front of you? Or when you’re in your house alone and you hear something that you shouldn’t? Will your evolutionary pattern recognition activate only then and put you in fight-or-flight? Or is everything you think and do a form of pattern recognition, a bunch of electrons manipulating a hundred billion switches to convert some input into a favorable output for you, the organism? Are you intelligent? Or just the product of a 4-billion year old organic learning system?

Modern LLMs are somewhere in between those primitive classification systems and the intelligence of humans today. They can perform word associations in a semantic higher dimensional space, encoding individual words as vectors and enabling the model to attribute a sort of meaning between two words. Comparing the encoding vectors in different ways gets you another word vector, yielding what could be called an association, or a scalar (like Euclidean or angular distance) which might encode closeness in meaning.
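For anyone who hasn’t seen this in practice, here is a toy sketch of the vector comparisons described above. The three-dimensional "embeddings" are invented by hand so the arithmetic works out cleanly; real models learn vectors with hundreds or thousands of dimensions, and the helper names are just for illustration.

```python
import math
from typing import Dict, Iterable, Tuple

Vector = Tuple[float, ...]

# Hypothetical hand-made embeddings: (royalty, male, female).
EMBEDDINGS: Dict[str, Vector] = {
    "king": (1.0, 1.0, 0.0),
    "queen": (1.0, 0.0, 1.0),
    "man": (0.0, 1.0, 0.0),
    "woman": (0.0, 0.0, 1.0),
}


def add(a: Vector, b: Vector) -> Vector:
    return tuple(x + y for x, y in zip(a, b))


def sub(a: Vector, b: Vector) -> Vector:
    return tuple(x - y for x, y in zip(a, b))


def cosine_similarity(a: Vector, b: Vector) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))


def angular_distance(a: Vector, b: Vector) -> float:
    """Angle between two vectors in radians (clamped against rounding error)."""
    return math.acos(max(-1.0, min(1.0, cosine_similarity(a, b))))


def nearest(vector: Vector, exclude: Iterable[str] = ()) -> str:
    """Word whose embedding has the smallest Euclidean distance to the vector."""
    candidates = {w: v for w, v in EMBEDDINGS.items() if w not in set(exclude)}
    return min(candidates, key=lambda w: math.dist(candidates[w], vector))


# Vector -> vector: an "association" obtained by combining word vectors.
analogy = add(sub(EMBEDDINGS["king"], EMBEDDINGS["man"]), EMBEDDINGS["woman"])
print(nearest(analogy, exclude=("king", "man", "woman")))

# Vector pair -> scalar: closeness in meaning as a distance or an angle.
print(math.dist(EMBEDDINGS["king"], EMBEDDINGS["queen"]))
print(angular_distance(EMBEDDINGS["king"], EMBEDDINGS["queen"]))
```

With these hand-picked numbers, "king - man + woman" lands exactly on "queen". With learned embeddings the result is only approximately there, which is why a nearest-neighbour lookup is used.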

Now if intelligence requires understanding as you say, what degree of understanding of its environment (ecosystem for organisms, text for LLMs; see different types of intelligence, paragraph 4) does an entity need for you to designate it as intelligent? What associations need it make? Categorizations of danger, not danger and food, not food? What is the difference between that and the Pavlovian responses of a dog? And what makes humans different, aside from a more complex neural structure that allows us to integrate orders of magnitude more information more efficiently?

Where do you draw the line?

A consciousness is not an “output” of a human brain. I have to say, I wish large language models didn’t exist, because now for every comment I respond to, I have to consider whether or not an LLM could have written that :(

In effect, you compare learning on training data: “input -> desired output” with systematic teaching of humans, where we are teaching each other causal relations. The two are fundamentally different.

Also, you are questioning whether or not logical thinking (as opposed to throwing some “loaded” neuronal dice) is even possible. In that case, you may as well stop posting right now, because if you can’t think logically, there’s no point in you trying to make a logical point.

systematic teaching of humans, where we are teaching each other causal relations. The two are fundamentally different.

So you mean that a key component to intelligence is learning from others? What about animals that don’t care for their children? Are they not intelligent?

What about animals that can’t learn at all, where their brains are completely hard wired from birth? Is that not intelligence?

You seem to be objecting that OP’s questions are too philosophical. The question “what is intelligence” can only be solved by philosophical discussion, trying to break it down into other questions. Why is the question about the “brain as a calculator” objectionable? I think it may be uncomfortable for you to even speak of, but that would only be an indicator that there is something to it.

It would indeed throw your world view upside down if you realised that you are also just a computer made of flesh and all your output is deterministic, given the same input.

So you mean that a key component to intelligence is learning from others? What about animals that don’t care for their children? Are they not intelligent?

You contradict yourself, the first part of your sentence getting my point correctly, and the second questioning an incorrect understanding of my point.

What about animals that can’t learn at all, where their brains are completely hard wired from birth? Is that not intelligence?

Such an animal does not exist.

It would indeed throw your world view upside down if you realised that you are also just a computer made of flesh and all your output is deterministic, given the same input.

That’s a long way of saying “if free will didn’t exist”, at which point your argument becomes moot, because I would have no influence over what it does to my world view.

My main point is that falsifying a hypothesis based on how it makes you feel is not very productive. You just repeated it again. You seem to get mad by just posing the question.

A consciousness is not an “output” of a human brain.

Fair enough. Obviously consciousness is more complex than that. I should have put “efferent neural actions” first in that case, consciousness just being a side effect, something different yet composed of the same parts, an emergent phenomenon. How would you describe consciousness, though? I wish you would offer that instead of just saying “nuh uh” and calling me chatGPT :(

Not sure how you interpreted what I wrote in the rest of your comment though. I never mentioned humans teaching each other causal relations? I only compared the training of neural networks to evolutionary principles, where at one point we had entities that interacted with their environment in fairly simple and predictable ways (a “deterministic algorithm” if you will, as you said in another comment), and at some later point we had entities that we would call intelligent.

What I am saying is that at some point the pattern recognition “trained” by evolution (where inputs are environmental distress/eustress, and outputs are actions that are favorable to the survival of the organism) became so advanced that it became self-aware (higher pattern recognition on itself?) among other things. There was a point, though, some characteristic, self-awareness or not, where we call something intelligence as opposed to unintelligent. When I asked where you draw the line, I wanted to know what characteristic(s) need to be present for you to elevate something from the status of “pattern recognition” to “intelligence”.

It’s tough to decide whether more primitive entities were able to form causal relationships. When they saw predators, did they know that they were going to die if they didn’t run? Did they at least know something bad would happen to them? Or was it just a pre-programmed neural response that caused them to run? Most likely the latter.

Based on all that we know and observe, a dog (any animal, really) understands concepts and causal relations to varying degrees. That’s true intelligence.

From another comment, I’m not sure what you mean by “understands”. It could mean having knowledge about the nature of a thing, or it could mean interpreting things in some (meaningful) way, or it could mean something completely different.

To your last point, logical thinking is possible, but of course humans can’t do it on our own. We had to develop a system for logical thinking (which we call “logic”, go figure) as a framework because we are so bad at doing it ourselves. We had to develop statistical methods to determine causal relations because we are so bad at doing it on our own. So what does it mean to “understand” a thing? When you say an animal “understands” causal relations, does it actually understand them or is it just another form of pattern recognition (which is why I mentioned Pavlov in my last comment)? When humans “understand” a thing, do we actually understand, or do we just encode it with the frameworks built on pattern recognition to help guide us? A scientific model is only a model, built on trial and error. If you “understand” the model you do not “understand” the thing that it is encoding. I know you said “to varying degrees”, and this is the sticking point. Where do you draw the line?

When you want to have artificial intelligence, even the most basic software can have some kind of limited understanding that actually fits this attempt at a definition - it’s just that the functionality will be very limited and pretty much appear useless. […] You could program image recognition using math to find certain shapes, which in turn - together with colour ranges and/or contrasts - could be used to associate object types, for which causal relations can be defined, upon which other parts of an AI could then base decision processes. This process has potential for error, but in a similar way that humans can mischaracterize the things we see - we also sometimes do not recognize an object correctly.

I recognize that you understand the point I am trying to make. I am trying to make the same point, just with a different perspective. Your description of an “actually intelligent” artificial intelligence closely matches how sensory data is integrated in the layers of the visual cortex, perhaps on purpose. My question still stands, though. A more primitive species would integrate data in a similar, albeit slightly less complex, way: take in (visual) sensory information, integrate the data to extract easier-to-process information such as brightness, color, lines, movement, and send it to the rest of the nervous system for further processing to eventually yield some output in the form of an action (or thought, in our case). Although in the process of integrating, we necessarily lose information along the way for the sake of efficiency, so what we perceive does not always match what we see, as you say. Image recognition models do something similar, integrating individual pixel information using convolutions and such to see how it matches an easier-to-process shape, and integrating it further. Maybe it can’t reason about what it’s seeing, but it can definitely see shapes and colors.

You will notice that we are talking about intelligence, which is a remarkably complex and nuanced topic. It would do some good to sit and think deeply about it, even if you already think you understand it, instead of asserting that whoever sounds like they might disagree with you is wrong and calling them chatbots. I actually agree with you that calling modern LLMs “intelligent” is wrong. What I ask is what you think would make them intelligent. Everything else is just context so that you understand where I’m coming from.

I had a bunch of sections of your comment that I wanted to quote, let’s see how much I can answer without copy-pasting too much.

First off, my apologies, I misunderstood your analogy about machine learning as a comparison not to evolution but to how we learn with our developed brains. I concur that the process of evolution is similar, except a bit less targeted (and hence so much slower) than deep learning. The result, however, is “cogito ergo sum” - a creature that started self-reflecting and wondering about its own consciousness. And this brings me to humans thinking logically: As such a creature, we are able to form logical thoughts, which allow us to understand causality. To give an example of what I mean: Humans (and some animals) did not need the invention of logic or statistics in order to observe moving objects and realize that where something moves, something has moved it - and therefore when they see an inanimate object move, they will eventually suspect the most likely cause for the move in the direction that the object is coming from. Then, when we do not find the cause (someone throwing something) there, we will investigate further (if curious enough) and look for a cause. That’s how curiosity turns into science. But it’s very much targeted, nothing a deep learning system can do. And that’s kind of what I would also expect from something that calls itself “AI”: a systematic analysis / categorization of the input data for the purpose of processing that the system was built for. And for a general AI, also the ability to analyze phenomena to understand their root cause.

Of course, logic is often not the same as our intuitive thoughts, but we are still able to correct our intuitive assumptions based on outcome, and then understand the actual causal relation (unlike a deep learning system) based on our corrected “model” of whatever we observed. In the end, that’s also how science works: We describe reality with a model, and when we discover a discrepancy, we aim to update the model. But we always have a model.

With regards to some animals understanding objects / causal relations, I believe - beyond having a concept of an object - defining what I mean by “understanding” is not really helpful, considering that the spectrum of intelligence among animals overlaps with that of humans. Some of the more clever animals clearly have more complex thoughts and you can interact with them in a more meaningful way than some of the humans with less developed brains, be it due to infancy, or a disability or psychological condition.

How would you describe consciousness, though? I wish you would offer that instead of just saying “nuh uh” and calling me chatGPT :(

First off, I meant the LLM comment seriously - I am already considering no longer participating in internet debates because LLMs have become so sophisticated that I will no longer be able to know whether I am arguing with a human, or whether some LLM is wasting my precious life time.

As for how to describe consciousness, that’s a largely philosophical topic and strongly linked to whether or not free will exists (IMO), although theoretically it would be possible to be conscious but not have any actual free will. I can not define the “sense of self” better than philosophers are doing it, because our language does not have the words to even properly structure our thoughts on that. I can however, tell you how I define free will:

- assuming you could measure every atom, sub-atomic particle, impulse & spin thereof, energy field and whatever other physical properties there are in a human being and its environment

- when that individual moves a limb, you would be able to trace - based on what we know:

  - the movement of the limb back to the muscles contracting

  - movement of the muscles back to electrical signals in some nerves

  - the nerve signals back to some neurons firing in the brain

- if you trace that chain of “causes” further and further, eventually, if free will exists, it would be impossible to find a measurable cause for some “lowest level trigger event”

And this lowest level trigger event - by some researchers attributed to quantum decay - might be / could be influenced by our free will, even if - because we have this “brain lag” - the actual decision happened quite some time earlier, and even if some decisions are hardwired (like reflexes, which can also be trained).

My personal model of how I would like consciousness to be: An as-of-yet undiscovered property of matter, that every atom has, but only combined with an organic computer that is complex enough to process and store information would such a property actually exhibit a consciousness.

In other words: If you find all the subatomic particles (or most of them) that made up a person in history at a given point in time, and reassemble them in the exact same pattern, you would, in effect, re-create that person, including their consciousness at that point in time.

If you duplicate them from other subatomic particles with the exact same properties (as far as we can measure) - who knows? Because we couldn’t measure nor observe the “consciousness property”, how would we know if that would be equal among all particles that are equal in the properties we can measure? That would be like assuming atoms of a certain element were all the same, because we do not see chemical differences for other isotopes.

The term has been stolen and redefined. It’s pointless to be pedantic about it at this point.

AI traditionally meant now-mundane things like pathfinding algorithms. The only thing people seem to want Artificial Intelligence to mean is “something a computer can almost do but can’t yet”.

AI is, by definition these days, a future technology. We think of AI as science fiction so when it becomes reality we just kick the can in the definition.

Makes me feel a little better. In 2024 I can’t get a “Windows ready” Bluetooth dongle to be recognized by my still-supported Windows computer.

To be fair, we only know where Bluetooth is useful because we put it in a lot of places where it wasn’t useful

Trial and error isn’t the only way to optimize things… It’s actually one of the worst, the one you use when you have no clue how to proceed

So no, that is not a justification for having done it or continuing to do it

Now I wonder if substituting the sugar in my coffee with arsenic would render a delicious new beverage… Only one way to find out!

I’m not talking about trial and error, I’m talking about throwing shit at the wall and seeing what sticks.

There might be good ideas out there that no one could think of until they accidentally get invented

I’m not talking about trial and error, I’m talking about throwing shit at the wall and seeing what sticks.

Is that not trial and error?

It’s kind of… the only way tho

Maybe not arsenic, but lead should work.

Same with AI

Sex joke here

That scene in Better Call Saul with the investment guy permanently on his BT earpiece was such a wave of nostalgia for me, used to see those everywhere in the 2000s with a little blue light on them flashing.

is it just me who hasn’t ever had any bluetooth problems?

Quite possibly. I don’t think I’ve ever had any Bluetooth device work without hiccups. My old earbuds used to disconnect or lose pairing all the time. A couple of game controllers I have only worked intermittently for years. My phone is always losing connection in our car. I’ve ironed out some of the problems, but I’ve never had Bluetooth just work for me.

Bluetooth with mobile devices I’d agree. But my work pc hates Bluetooth devices. Such as refusing to use the correct audio channel with headphones, so I still use wired headphones.

I’ve always felt Windows could be temperamental with Bluetooth, especially pre Windows 7. Like XP seemed to be a shitshow for Bluetooth.

Bluetooth audio has always been absolutely awful in windows as far as I recall. Bluetooth in general is super temperamental, I recall fighting with data loggers my first job out of uni that only connected via Bluetooth. Older ones were serial and were actually reliable.

Bluetooth reliability by OS

Android > Ubuntu > iOS (because of the stupid automatic turn back on anti-feature) > Windows > Linux Lite (possibly due to 2009 hardware?)

For real, Bluetooth is near flawless in Debian and Mint from my experience. Mint is on a 2013 laptop too, with no issues connecting to modern speakers.

Personally I prefer hard wire for audio where possible but it’s really convenient for a garage speaker and kitchen speaker

I’ve had bad results on Mac OSX as well.

It’s partly a timing thing, and maybe that puts the issues in the apps. If I am using my headset, it also works in things like Teams and Slack. However, audio doesn’t want to switch if I turn on the headset in the app. Even worse is if I turn the headset on right before launching a call. It’s better now that I turn on the headset, then do a slow count to ten before starting a call, but still not reliable.

I hate everything wireless when it comes to PC peripherals. They just randomly stop working or have a weird, noticeable lag. I have a 3 bucks WLAN adapter on my RPi that surprisingly works OK, but that’s it.

Bluetooth is like the SpongeBob “repeating then saying something different” meme where you go through the whole annoying pairing process, then it plays through the PC speaker anyway

So you don’t know how to select an audio device on your computer eh?

Why are you personally offended by Bluetooth being bad?

Bluetooth gives me the same sensation as a stove with faulty knobs. It’s like there’s a veil between me and the machine.